Top 10 Business Directories of USA: Best Platforms for Local Visibility in 2026


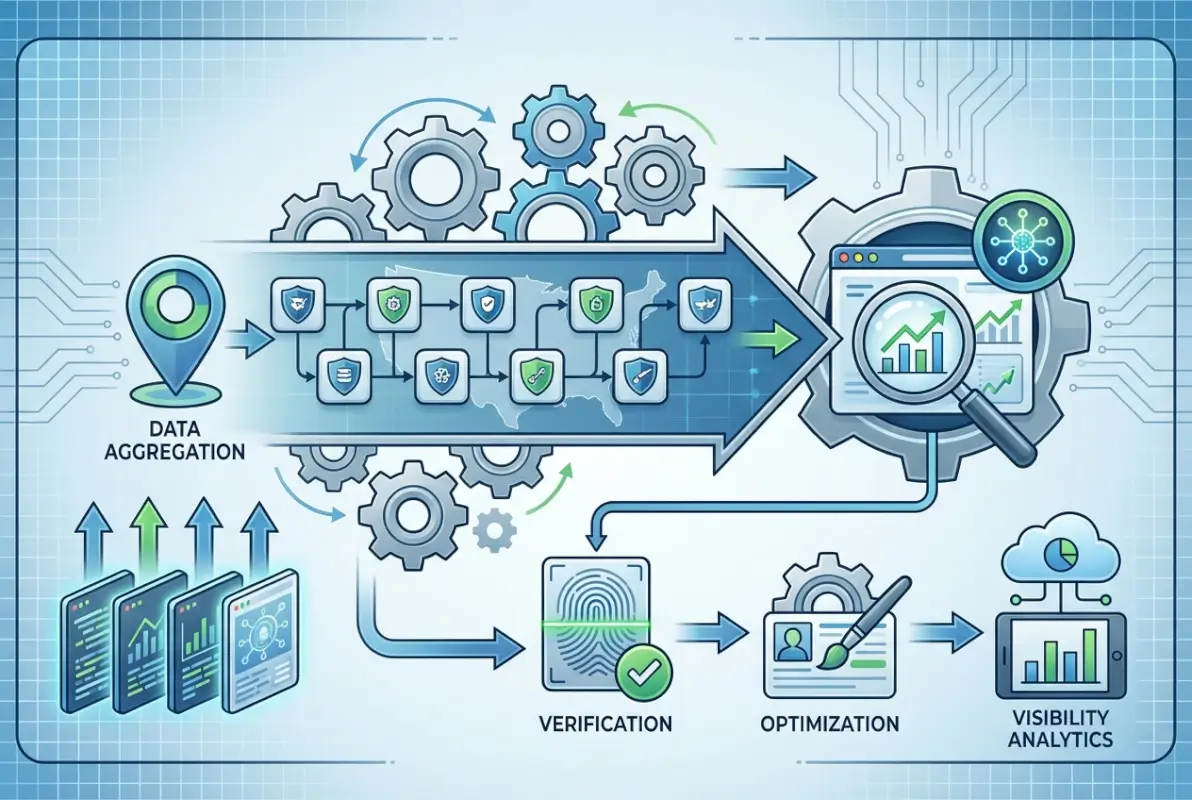

Most business owners pour thousands into ads and SEO, completely missing a simpler play that’s hiding in plain sight. The top 10 business directories in the USA aren’t just digital phone books; they’re discovery engines in a market where 76% of local mobile searches lead to a physical store visit within 24 hours. While your competitors fight over Google’s first page, strategic directory listings create multiple front doors to your business, each validated by platforms consumers already trust.

Here’s what the “list everywhere” crowd won’t tell you: dumping your business on 200 directories does nothing. Worse, inconsistent information across low-authority sites actively damages your local search rankings. The real opportunity? Ten high-impact directories, properly optimized, deliver more qualified leads than fifty mediocre listings ever will.

- Google Business Profile dominates – Controls 92% of local search discovery, making it your non-negotiable foundation

- Directory signals compound – Consistent NAP across authoritative platforms boosts local pack rankings by up to 70%

- Quality beats quantity – Ten optimized listings outperform 100 incomplete ones for both SEO and customer trust

- Industry directories convert higher – Niche platforms deliver 3-5x better conversion rates than general listings

- Active management matters – Listings with regular updates and review responses see 43% more customer inquiries

Why Directories Drive Discovery in 2026

Search behavior has shifted dramatically. Consumers don’t just Google anymore: they start on maps, ask AI assistants, and trust platform-specific reviews before making contact. Business directories serve as validated entry points across this fragmented discovery landscape.

The mechanics are straightforward. When your business appears consistently across Google Business Profile, Bing Places, and Apple Maps, search engines interpret this as credible confirmation of your existence and relevance. This validation directly influences whether you appear in local packs—those map-based results sitting above organic listings that capture 44% of all local search clicks.

Beyond search algorithms, directories provide third-party credibility. A Better Business Bureau listing or industry association membership displayed alongside your business information acts as implicit endorsement. For service businesses especially, this trust signal often determines whether a prospect contacts you or moves to the next option.

The multiplier effect matters more than most realize. Each quality directory listing creates another pathway for discovery, spreading your digital footprint without corresponding increases in ad spend. A plumbing company I worked with saw this firsthand—after properly claiming and optimizing their top eight directory profiles, they cut paid advertising by 60% while maintaining lead volume. The directories were doing the heavy lifting they’d been paying Google Ads to handle.

Top 10 Business Directories of USA for 2026

Not all directories deliver equal value. The platforms below represent the highest-impact opportunities for U.S. businesses based on traffic, authority, and conversion potential. Each serves a specific function in your overall visibility strategy.

1. Google Business Profile

Google Business Profile isn’t optional—it’s the foundation everything else builds on. With over 5 billion monthly interactions and direct integration into Google Search and Maps, GBP determines whether you appear in local packs, knowledge panels, and map results. According to Google’s official business listing guidance, complete profiles receive 7x more clicks than incomplete ones.

Optimization requires more than filling out fields. Regular posts about offers, events, or updates signal active management to Google’s algorithm. High-quality photos—especially of your team, location interior, and work examples—increase engagement by 42%. Customer questions answered promptly in the Q&A section reduce friction and demonstrate responsiveness.

The insights dashboard reveals exactly how customers find you: direct searches for your business name, discovery searches for your category, and map views. This data shows whether brand awareness or category visibility needs more attention, directing where to focus effort.

2. Bing Places for Business

Dismissing Bing is leaving money on the table. Microsoft’s search engine powers 36% of U.S. desktop searches and skews toward older, higher-income demographics—exactly the audience many service businesses target. Better yet, competition for visibility on Bing remains lower than Google, making top positions more achievable.

Bing Places syncs with Apple Maps and other platforms through data aggregators, amplifying your listing’s reach beyond Bing’s own traffic. The setup process mirrors Google Business Profile, so if you’ve optimized GBP, Bing Places requires minimal additional effort. Check Bing’s business listing platform for their current optimization recommendations.

3. Apple Business Connect (formerly Apple Maps Connect)

Apple Maps quietly dominates mobile navigation for iOS users, roughly 45% of U.S. smartphone owners. When iPhone users search for businesses or ask Siri for recommendations, Apple Maps provides the answers. A business missing from this platform is invisible to nearly half of mobile searchers.

Apple’s quality standards run higher than most directories. They verify business information more aggressively and remove listings with inconsistent data. This stricter approach actually benefits businesses that maintain accurate profiles—you’re competing against fewer half-completed listings.

4. Yelp

Despite controversies, Yelp’s 178 million monthly visitors make it hard to ignore for consumer-facing businesses. Restaurants, home services, healthcare, and retail see the strongest results, with Yelp reviews often appearing in Google search results even when users aren’t on Yelp directly.

Active engagement matters more on Yelp than passive listing. Responding to reviews—both positive and negative—signals to potential customers that you care about feedback. The platform’s Request a Quote feature for service businesses creates direct lead generation opportunities beyond simple discovery.

Yelp’s algorithm favors businesses with consistent review velocity over those with many old reviews. A restaurant with 50 reviews in the past six months outranks one with 200 reviews from three years ago. This rewards ongoing customer engagement rather than past performance.

5. Facebook Business Page

Facebook’s 2.9 billion users include your customers, regardless of your industry. The platform functions as both social network and business directory, with search capabilities and review systems that influence local discovery. Facebook’s integration with Instagram extends your reach across Meta’s ecosystem.

The platform’s check-in feature and location tagging create organic visibility when customers visit your business. Each tagged post appears in their friends’ feeds, generating social proof and awareness that traditional directories can’t match. For businesses with physical locations, this ambient marketing compounds over time.

6. Better Business Bureau

BBB’s century of trust-building translates directly into consumer confidence. The platform attracts 127 million annual visitors actively researching business reliability before making purchases. For industries where trust is paramount—home services, auto repair, financial services—BBB accreditation can be the deciding factor in winning bids.

The BBB rating system provides standardized credibility signals consumers understand. While accreditation requires payment and adherence to standards, even a basic listing with complaint resolution history demonstrates transparency that builds trust. According to research from the Better Business Bureau, 88% of consumers trust BBB-accredited businesses more than non-accredited alternatives.

7. Yellow Pages (YP.com)

YP.com successfully transitioned from print to digital while maintaining brand recognition, especially among older demographics. The platform sees 60 million monthly visitors who actively search by category—people who know what service they need but haven’t selected a provider yet.

Enhanced listings on YP.com allow detailed service descriptions, multiple categories, and visual galleries. The platform’s categorical structure makes it particularly effective for specialized services where consumers search by need rather than business name.

8. Angi (formerly Angie’s List)

Angi dominates home services discovery with 30 million active users specifically seeking contractors, repair services, and home improvement professionals. The platform’s verification processes and detailed review system create a high-trust environment that converts well—users on Angi are further down the decision funnel than casual browsers.

The project request feature generates direct leads by matching homeowner needs with qualified service providers. While this lead generation model involves per-lead costs, the qualified nature of these inquiries typically produces higher conversion rates than general directory traffic.

9. Chamber of Commerce Directories

Local Chamber membership provides directory listing plus networking opportunities and community credibility. Many consumers specifically seek Chamber-affiliated businesses, viewing membership as evidence of community investment and business stability.

Chamber directories typically have strong local SEO value due to their .org domains and community focus. For businesses serving specific geographic areas, these locally focused listings often outperform broader platforms for geographic search queries.

10. Industry-Specific Directories

Niche directories deliver the highest conversion rates by connecting you with precisely targeted audiences. Healthgrades for medical providers, Avvo for attorneys, TripAdvisor for hospitality, and Zillow for real estate all serve audiences actively seeking those specific services.

For businesses running their own directories or considering white-label solutions, platforms like TurnKey Directories (turnkeydirectories.com) provide WordPress-based tools to create industry-specific directories that can become valuable assets in their own right.

| Directory | Reach (as cited above) | Best For | Key Advantage |
|---|---|---|---|
| Google Business Profile | 5B+ monthly interactions | All local businesses | Local pack dominance |
| Bing Places | 1B+ monthly | Desktop users, B2B | Lower competition |
| Yelp | 178M monthly visitors | Restaurants, services | Review authority |
| BBB | 127M annual visitors | Trust-critical industries | Credibility signal |
| Angi | 30M active users | Home services | Qualified leads |

Optimization Strategies for Maximum Impact

Creating listings is step one. Optimization determines whether they actually drive results. The difference between a bare-bones listing and an optimized profile can mean a 10x variation in leads generated.

NAP Consistency Is Non-Negotiable

Name, Address, Phone number (NAP) must match exactly across every platform. Not similar—identical. “123 Main Street” on one directory and “123 Main St.” on another confuses search engines and dilutes your citation power. Choose one format and replicate it everywhere.

This extends to phone numbers (use the same number across all platforms) and business name (including or excluding LLC, Inc., etc. consistently). According to Whitespark’s local search ranking factors research, NAP consistency accounts for 15% of local pack ranking factors.
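The exact-match rule above can be checked mechanically. The following is a minimal Python sketch, not a definitive audit tool: the function names, the abbreviation map, and the sample business are all hypothetical. It compares each directory's NAP against one canonical record and separates formatting-only differences (such as "St." versus "Street") from substantive ones (such as a missing "LLC").

```python
import re

# Hypothetical abbreviation map; extend it to cover the format you standardize on.
STREET_ABBREVIATIONS = {
    "st": "street", "ave": "avenue", "rd": "road",
    "blvd": "boulevard", "ste": "suite",
}

def normalize_nap(name, address, phone):
    """Reduce a (name, address, phone) record to a loosely comparable form."""
    def clean(text):
        # Lowercase, strip punctuation, and expand common street abbreviations.
        words = re.sub(r"[^\w\s]", "", text.lower()).split()
        return " ".join(STREET_ABBREVIATIONS.get(w, w) for w in words)
    # Keep the last 10 digits so a leading US country code "1" is ignored.
    digits = re.sub(r"\D", "", phone)[-10:]
    return clean(name), clean(address), digits

def audit_nap(canonical, listings):
    """Classify each directory listing against the canonical NAP tuple.

    Returns a dict mapping directory name to one of:
      "match"       - identical strings, nothing to fix
      "format_only" - same business data, different formatting
      "different"   - name, address, or phone actually differs
    """
    canonical_norm = normalize_nap(*canonical)
    results = {}
    for directory, nap in listings.items():
        if nap == canonical:
            results[directory] = "match"
        elif normalize_nap(*nap) == canonical_norm:
            results[directory] = "format_only"
        else:
            results[directory] = "different"
    return results

# Illustrative data only; the business and phone number are made up.
canonical = ("Acme Plumbing LLC", "123 Main Street", "(312) 555-0100")
listings = {
    "google": ("Acme Plumbing LLC", "123 Main Street", "(312) 555-0100"),
    "yelp": ("Acme Plumbing LLC", "123 Main St.", "312-555-0100"),
    "yp": ("Acme Plumbing", "123 Main Street", "(312) 555-0100"),
}
report = audit_nap(canonical, listings)
print(report)
```

Anything other than "match" should be corrected to the canonical format. The "format_only" label simply helps prioritize: "different" entries are the ones most likely to point at a duplicate or outdated listing.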

Complete Every Available Field

Partial profiles perform poorly. Complete profiles signal to both algorithms and consumers that you’re an active, established business. Business hours, service areas, payment methods, accessibility features, parking information—fill everything the platform offers.

Categories deserve special attention. Select your primary category carefully (it has the most weight), then add all relevant secondary categories. A restaurant might be “Italian Restaurant” primarily, but also “Pizza Restaurant,” “Wine Bar,” and “Catering Service” if applicable.

Visual Content Drives Engagement

Listings with photos receive 42% more direction requests and 35% more website clicks than those without. But not just any photos—invest in professional images of your location exterior and interior, team members, products, and work examples.

Google Business Profile specifically recommends uploading at least three photos: logo, cover photo, and additional images. For restaurants, menu photos are essential. For service businesses, before/after examples prove capability.

Regular Updates Signal Active Management

Static listings decline in performance over time. Platforms like Google Business Profile favor businesses that post regularly—weekly is ideal. Posts about offers, events, products, or simple updates keep your listing fresh in algorithms and in customer feeds.

Seasonal updates matter too. Adjust hours for holidays, update photos to reflect the current season, and refresh service descriptions to highlight timely offerings. A landscaping company should emphasize different services in spring versus fall.

Review Management Across Platforms

Reviews make or break directory effectiveness. They influence both search rankings and consumer decisions, with 87% of consumers reading online reviews before visiting local businesses. The challenge isn’t getting reviews—it’s managing them strategically across multiple platforms.

The Response Strategy

Responding to reviews does three things: shows potential customers you’re engaged, provides context for negative feedback, and signals to algorithms that your listing is actively managed. Response rate and speed are widely treated as ranking signals on Google Business Profile, although Google does not publish exact weights.

For positive reviews, keep responses personal but brief. Thank the customer, mention something specific from their review, and invite them back. For negative reviews, respond professionally within 24 hours, acknowledge the issue, offer to make it right offline, and provide contact information.

I watched a dental practice transform their reputation by simply responding thoughtfully to every review. Their average rating didn’t change much (they were already good), but their conversion rate from profile views to appointment requests jumped 31% because prospects saw the practice actively engaged with patient feedback.

Soliciting Reviews Ethically

Ask satisfied customers directly, immediately after positive interactions. Make it easy—send a text or email with direct links to your preferred platforms. Don’t offer incentives (most platforms prohibit this) and never write fake reviews.

Diversify review platforms. While Google reviews carry the most weight for local search, reviews on industry-specific directories (Healthgrades, Avvo, etc.) often influence decision-making more for consumers already on those platforms.

Measuring Directory ROI and Key Metrics

Without measurement, you’re guessing about what works. Directory performance requires tracking specific metrics that connect visibility to actual business outcomes.

Traffic and Engagement Metrics

Google Business Profile Insights shows how many people found your profile through discovery searches versus direct searches, how many viewed your photos, requested directions, clicked to your website, or called your business. This data reveals which actions your profile drives most effectively.

For other directories, use UTM parameters in your website links to track traffic sources in Google Analytics. Create a unique tracking URL for each directory (for example, yoursite.com/?utm_source=yelp&utm_medium=listing) to see exactly which platforms send visitors.
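Tracking URLs like the one above are easy to mistype by hand, so it can help to generate them. This is a small Python sketch using only the standard library; the function name `build_tracking_url` and the default medium `listing` are illustrative choices, and `yoursite.com` is the placeholder domain from the example.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

def build_tracking_url(base_url, directory, medium="listing", campaign=None):
    """Return base_url with UTM parameters identifying the directory."""
    parts = urlsplit(base_url)
    # Preserve any query parameters the landing page already uses.
    query = dict(parse_qsl(parts.query))
    query["utm_source"] = directory
    query["utm_medium"] = medium
    if campaign:
        query["utm_campaign"] = campaign
    path = parts.path or "/"  # ensure a path before the "?" separator
    return urlunsplit(
        (parts.scheme, parts.netloc, path, urlencode(query), parts.fragment)
    )

# One tracking link per directory, all pointing at the same landing page.
for source in ("yelp", "bing_places", "bbb"):
    print(build_tracking_url("https://yoursite.com", source))
```

Use the same lowercase source name every time you create a link for a given directory, since analytics tools generally treat `Yelp` and `yelp` as separate sources.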

Lead Attribution

Call tracking numbers unique to each directory show which platforms generate phone inquiries. Services like CallRail or CallTrackingMetrics provide directory-specific numbers that forward to your main line while tracking the source.

Form submissions tracked through your CRM or analytics platform reveal which directories send qualified leads versus tire-kickers. Conversion rate matters more than raw traffic—some directories send fewer visitors but higher-intent prospects.

Revenue Tracking

The ultimate measure is revenue per directory. Track new customers from discovery through purchase, noting which directory initiated contact. This closed-loop attribution shows true ROI and informs where to invest ongoing optimization effort.
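As a rough illustration of closed-loop attribution, the Python sketch below computes lead conversion rate, close rate, and ROI per directory. The `DirectoryStats` fields and every number in the sample data are invented for the example; real figures would come from your analytics, call tracking, and CRM exports.

```python
from dataclasses import dataclass

@dataclass
class DirectoryStats:
    visits: int      # profile or website visits attributed to the directory
    leads: int       # calls and form submissions attributed to it
    customers: int   # leads that became paying customers
    revenue: float   # revenue from those customers
    cost: float      # listing fees, per-lead costs, and so on

def summarize(stats):
    """Return conversion and ROI figures for one directory."""
    return {
        "lead_conversion_rate": stats.leads / stats.visits if stats.visits else 0.0,
        "close_rate": stats.customers / stats.leads if stats.leads else 0.0,
        "revenue": stats.revenue,
        # ROI is undefined for free listings, so report None instead of dividing by zero.
        "roi": (stats.revenue - stats.cost) / stats.cost if stats.cost else None,
    }

# Illustrative numbers only.
directories = {
    "google": DirectoryStats(visits=1200, leads=36, customers=9, revenue=5400.0, cost=0.0),
    "angi": DirectoryStats(visits=400, leads=20, customers=5, revenue=2500.0, cost=500.0),
}

# List directories from highest to lowest attributed revenue.
for name, stats in sorted(directories.items(), key=lambda kv: kv[1].revenue, reverse=True):
    print(name, summarize(stats))
```

Comparing `lead_conversion_rate` alongside revenue makes the earlier point concrete: in this made-up data the paid directory sends a third of the visits but converts them at a higher rate.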

According to research from Statista’s search engine market share data, businesses that track directory performance outperform those that don’t by an average of 23% in local search revenue.

Which business directory is most important for local SEO?

Google Business Profile is the most critical directory for local SEO, controlling the majority of local search results and map pack placements. It directly influences whether your business appears in Google’s local 3-pack and knowledge panels, making it the non-negotiable foundation for any local visibility strategy.

How many directories should I list my business on?

Focus on 10-15 high-authority directories rather than hundreds of low-quality listings. Prioritize Google Business Profile, Bing Places, Apple Maps, Yelp, and industry-specific directories relevant to your business. Quality and complete optimization of these core platforms delivers better results than thin presence across dozens of minor directories.

What is NAP consistency and why does it matter?

NAP consistency means your business Name, Address, and Phone number appear identically across all directories and platforms. Search engines use NAP data to verify business legitimacy and location. Inconsistent information confuses algorithms, dilutes citation value, and can harm local search rankings; local ranking factor studies attribute roughly 15% of local pack ranking weight to NAP consistency.

How long does it take to see results from directory listings?

Initial traffic from directories typically appears within 2-4 weeks of claiming and optimizing listings. Full SEO benefits, including improved local pack rankings, usually materialize over 2-3 months as search engines index and validate your citations. Immediate benefits like direct calls or direction requests can occur within days of optimization.

Should I use paid directory listings or free ones?

Start with free listings on major platforms like Google Business Profile, Bing Places, and Facebook. Invest in paid listings only on high-traffic, industry-specific directories where your target audience actively searches. Paid BBB accreditation or Angi enhanced listings can deliver ROI for trust-critical or home service businesses, but avoid paying for obscure directories.

How do I handle duplicate directory listings?

Search each directory for existing listings before creating new ones, using variations of your business name and phone number. When duplicates exist, claim and merge them through the platform’s support process. For unclaimed duplicates you can’t access, contact directory support directly to request removal or consolidation to prevent NAP inconsistency issues.

What information should I include in directory listings?

Include complete NAP, business hours, website URL, service areas, payment methods, accessibility features, detailed business description with relevant keywords, high-quality photos, and accurate categorization. Complete every available field—partial profiles perform significantly worse than comprehensive ones. Regular updates with posts, offers, and fresh photos further boost performance.

Can business directories help with website SEO beyond local search?

Yes, directory listings create backlinks to your website from authoritative domains, which can improve overall domain authority and organic rankings. Citations also generate brand mentions and referral traffic. However, the primary benefit remains local search visibility—directory impact on broader organic SEO is secondary to their local ranking influence.

Your Next Steps: Claim, Optimize, Measure

Directory strategy isn’t complicated, but it requires systematic execution. Start with the big five—Google Business Profile, Bing Places, Apple Maps, Yelp, and Facebook. Claim each listing, verify ownership, and spend the time to complete every field thoroughly.

Once your foundation listings are complete, add industry-specific directories where your customers actually search. A medical practice needs Healthgrades more than Yellow Pages. A contractor needs Angi more than LinkedIn.

The businesses winning local search aren’t doing anything magical. They’re simply maintaining consistent, complete, actively managed directory profiles across the platforms that matter. They respond to reviews. They update information seasonally. They measure what works and double down on high-performing directories.

Block Two Hours This Week

Your competitors are claiming directory visibility while you read this. Don’t let them capture customers who should be finding you.

Audit your current directory presence—search your business name and see what appears. Claim unclaimed listings, fix inconsistent NAP information, and complete at least your Google Business Profile fully. Those two hours could generate qualified leads for months to come.
