Best White Label Business Directory Software: 5 Solutions Compared (2025 Guide)

Choosing white label business directory software isn’t just about finding a platform with the most features—it’s about selecting a solution that aligns perfectly with your business model, technical capabilities, and growth trajectory. After years of implementing directory solutions for clients (and making costly mistakes with my first directory project), I’ve learned that the flashiest platforms often create the biggest headaches down the line.

The truth most articles won’t tell you? The best white label directory software for your needs might actually be the one with fewer features but better execution. I’ve watched clients struggle with feature-rich platforms that looked impressive in demos but became technical nightmares during daily operations. Meanwhile, others have built six-figure directories on simpler platforms that just worked.

What separates successful directory owners from those who abandon their projects isn’t the software they choose—it’s understanding which features actually matter for their specific use case. A local business directory has fundamentally different requirements than a B2B services marketplace, yet most comparison articles treat all directories the same way.

TL;DR – Quick Takeaways

- White label directory software lets you launch custom-branded directories without coding expertise or custom development costs

- Top solutions for 2025 include TurnKey Directories (best WordPress scalability), eDirectory (enterprise features), Brilliant Directories (easiest for beginners), ListingPro (WordPress flexibility), and Directory Engine (location-focused)

- Prioritize these features: scalability to 10,000+ listings, SEO automation (schema markup, sitemaps), multiple monetization models, and responsive mobile design

- Cost reality: One-time purchases ($150-$2,000) often beat subscription models ($50-$500/month) for long-term ownership, but factor in hosting and support

- Test before committing: Upload 50+ sample listings during trials to evaluate real-world performance, not just demo experiences

Understanding White Label Directory Software in 2025

White label business directory software provides a complete, ready-made platform that you can rebrand and customize under your own company name. Think of it as buying a fully-equipped restaurant that’s ready to operate—you put your name on the door, choose the decor, and adjust the menu without building the kitchen from scratch.

These platforms handle the complex technical infrastructure (user registration, listing management, search functionality, payment processing) while giving you control over branding, design, and business logic. The difference from custom development? You’re deploying in weeks instead of months, and spending hundreds instead of tens of thousands of dollars.

According to the U.S. Small Business Administration’s Office of Advocacy, there are over 33 million small businesses in the United States alone. Even capturing a tiny niche of this market with a specialized directory can generate substantial revenue through listing fees, featured placements, and advertising.

The market has evolved significantly. Early white label solutions offered basic listing functionality with minimal customization. Modern platforms now include AI-powered categorization, automated SEO optimization, mobile apps, and sophisticated monetization tools. The challenge isn’t finding a platform—it’s finding one that matches your specific requirements without unnecessary complexity.

Why Businesses Choose White Label Solutions

The appeal comes down to three core advantages: speed, cost, and reduced technical risk. I’ve seen clients launch comprehensive directories in under two weeks using white label platforms—something that would take 3-6 months with custom development.

Cost savings are equally dramatic. Custom directory development typically starts at $50,000 for basic functionality and can exceed $150,000 for enterprise features. White label solutions deliver comparable functionality for $150-$2,000 initially, with ongoing costs ranging from $0-$500 monthly depending on your hosting and support choices.

The hidden benefit? Risk reduction. With white label software, you’re using proven technology that’s been tested by thousands of users. Bugs have been found and fixed, security vulnerabilities have been patched, and performance has been optimized. Custom development means you’re discovering all these issues yourself—an expensive and time-consuming process.

Branding control remains comprehensive despite using third-party technology. Modern white label platforms let you customize everything users see: domain names, color schemes, logos, page layouts, email templates, and even URL structures. Your users never need to know you’re using a platform provider.

Critical Features That Actually Matter

Not all directory features deliver equal value. After working with dozens of directory implementations, I’ve identified the features that separate successful directories from abandoned projects.

Scalability isn’t just about handling large numbers of listings—it’s about maintaining performance as you grow. Many platforms work beautifully with 100 listings but become painfully slow at 1,000. The best platforms handle 10,000+ listings without performance degradation, even on standard hosting plans.

SEO automation is non-negotiable. Your platform should automatically generate XML sitemaps, implement schema markup for local business listings, create SEO-friendly URLs, and handle canonical tags correctly. Manual SEO optimization for thousands of listings simply isn’t practical. Google’s structured data documentation provides the standards your platform should support out of the box.
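To make the schema markup requirement concrete, here is a minimal sketch of the kind of JSON-LD a platform would need to emit for each listing. It uses the schema.org `LocalBusiness` and `PostalAddress` types; the function names and the sample listing are hypothetical and do not come from any of the platforms reviewed here.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, phone, url):
    """Build a schema.org LocalBusiness object for one listing."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag that is placed in the page HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical listing, for illustration only.
listing = local_business_jsonld(
    name="Example Bakery",
    street="123 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    phone="+1-555-0100",
    url="https://example.com/listings/example-bakery",
)
print(as_script_tag(listing))
```

During a trial, viewing the page source of a listing and looking for a block like this is a quick way to check whether a platform really generates the markup automatically.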

Monetization flexibility determines your revenue potential. Look for platforms supporting multiple income streams: premium listing tiers, featured placements, banner advertising, lead generation forms, subscription memberships, and affiliate integrations. The most successful directories combine 3-4 revenue models rather than relying on a single income source.

| Feature Category | Must-Have | Nice-to-Have | Usually Unnecessary |
|---|---|---|---|
| Scalability | 10,000+ listings | 50,000+ listings | Unlimited listings |
| SEO Tools | Schema markup, sitemaps | Automated meta descriptions | Built-in link building |
| Mobile Experience | Responsive design | PWA capabilities | Native mobile apps |
| Monetization | Paid listings, featured spots | Membership tiers, ads | Affiliate marketplace |
| Admin Tools | Bulk editing, analytics | Automated moderation | AI content generation |

Advanced Features Worth Considering

Mobile optimization has evolved from optional to critical. Over 60% of directory searches now happen on mobile devices, and Google’s mobile-first indexing means your mobile experience directly affects search rankings. Your platform should deliver fast-loading, touch-friendly experiences without requiring separate mobile development.

Integration capabilities extend your directory’s functionality without custom coding. The ability to connect with payment processors (Stripe, PayPal), email marketing platforms (Mailchimp, ConvertKit), CRM systems, and mapping services (Google Maps, Mapbox) creates professional experiences that would otherwise require extensive development.

Analytics and reporting tools provide the insights needed for data-driven decisions. Look for platforms offering visitor behavior tracking, listing performance metrics, revenue analytics, and conversion funnel analysis. These insights help you optimize your business model and marketing strategy based on actual user behavior rather than assumptions.

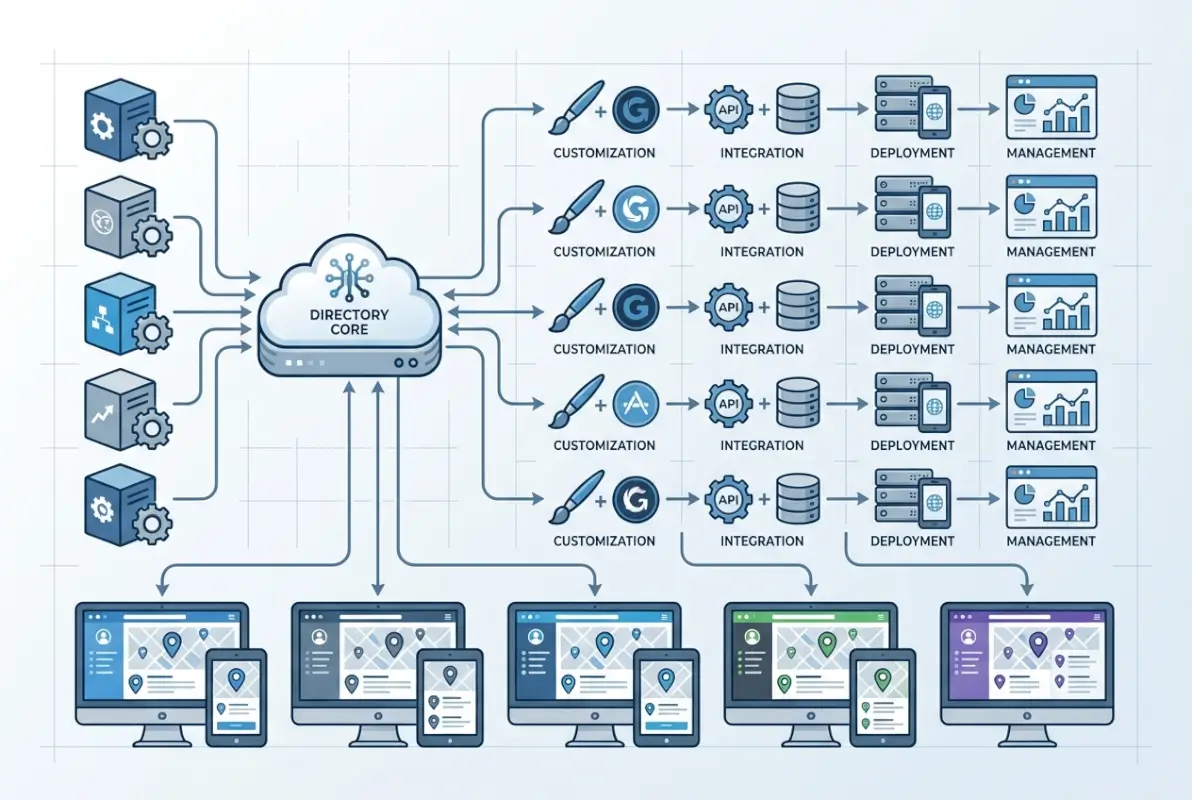

Top 5 White Label Business Directory Software Solutions

After evaluating dozens of platforms and implementing solutions for various clients, these five solutions stand out for different use cases. The “best” platform depends entirely on your specific requirements, technical comfort level, and growth plans.

1. TurnKey Directories – Best for WordPress Users Seeking Performance

TurnKey Directories has revolutionized the WordPress directory space by solving the scalability problem that plagued earlier solutions. Where most WordPress directories slow to a crawl beyond 1,000 listings, TurnKey maintains performance up to 50,000 listings through innovative virtual page technology.

The platform’s standout feature is its comprehensive SEO automation. It automatically generates sitemaps, implements schema markup for business listings (Name, Address, Phone), and even creates featured images using Google Street View integration. This automation saves hundreds of hours compared to manual optimization.

Monetization options include six built-in revenue models: display ads within listings, affiliate marketing integration, a “Rank & Rent” model for premium positions, lead generation forms, sponsored search results, and a claim listing feature that lets businesses pay to control their information.

Pricing follows a one-time purchase model between $299-$599 depending on features needed, with no recurring subscriptions. This includes lifetime updates and community support—a refreshing change from the subscription fatigue affecting most SaaS solutions.

Having worked with clients who migrated to TurnKey Directories from other platforms, I’ve found the performance difference remarkable. One client went from 4-second page loads with 800 listings on their previous platform to under 1 second with 5,000 listings on TurnKey. The administrative overhead dropped dramatically as well, with automated features handling tasks that previously required manual intervention.

2. eDirectory – Best for Enterprise-Level Features

eDirectory positions itself as the comprehensive solution for serious directory businesses. The platform offers extensive customization options, multiple directory types (business listings, classifieds, real estate, review sites), and sophisticated monetization tools.

Key strengths include powerful SEO capabilities beyond basic optimization—detailed SEO settings for each listing, automated schema implementation, and granular control over indexing. The platform excels at monetization with support for recurring subscriptions, featured listings, banner advertising, deal promotions, and commission-based transactions.

Pricing starts at $499 monthly for cloud hosting or approximately $1,495 for a one-time license purchase. While this places eDirectory at the premium end of the market, the feature set justifies the investment for businesses with serious revenue goals.

The learning curve is steeper than some alternatives—expect to invest time mastering the platform’s extensive capabilities. However, clients using eDirectory consistently report that the initial investment pays off through the platform’s scalability and revenue optimization tools.

3. Brilliant Directories – Best for Non-Technical Users

Brilliant Directories has earned its reputation as the most user-friendly option, with a genuine “no coding required” approach that dramatically lowers the barrier to entry. The drag-and-drop builder, intuitive interface, and guided setup process make it ideal for entrepreneurs without technical backgrounds.

The platform shines in community-building features: integrated messaging systems, member directories, content restriction capabilities, and membership management tools. These features enable private communities and membership sites alongside traditional directory functionality.

Pricing starts at approximately $195 monthly with all-inclusive hosting, updates, and support bundled together—eliminating surprise costs. The higher tier plans ($297-$497 monthly) add advanced features like custom domains, API access, and priority support.

Customization options are more limited compared to WordPress-based solutions or eDirectory, but for most directory owners, the available options strike a good balance between flexibility and usability. I’ve watched non-technical clients launch successful directories within two weeks using Brilliant Directories—something that would be nearly impossible with more complex platforms.

4. ListingPro – Best for WordPress Flexibility

ListingPro combines powerful directory features with the familiar WordPress ecosystem, providing access to thousands of plugins and themes for nearly unlimited extension possibilities. This integration appeals to users already comfortable with WordPress administration.

Standout features include an advanced review and rating system, claim listing functionality allowing businesses to manage their own profiles, robust location-based search, and a well-designed frontend submission system. The platform handles the most common directory use cases without requiring additional plugins.

Pricing operates on a one-time purchase model starting at approximately $149 for a single site license, making it one of the most cost-effective long-term options. However, this doesn’t include hosting costs, and some advanced features require premium add-ons that increase the total investment.

The WordPress foundation creates both advantages and challenges. Flexibility and familiarity are major benefits, but performance optimization requires more attention than standalone platforms. Directories built with ListingPro often benefit from specialized WordPress hosting to maintain speed as they scale beyond a few hundred listings.

5. Directory Engine – Best for Location-Based Directories

Directory Engine specializes in geographical and location-based directories, making it particularly well-suited for local business directories, tourism guides, city portals, and regional service provider listings. This specialization means superior mapping and location features compared to general-purpose platforms.

Key features include advanced mapping integration with multiple providers, geo-location services that automatically detect user locations, detailed proximity search capabilities, and strong taxonomy management for organizing complex category structures.
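For readers wondering what proximity search involves under the hood, the sketch below shows one common approach: computing great-circle distance with the haversine formula and filtering listings by radius. This is a generic illustration, not Directory Engine’s implementation; the function names, coordinates, and listings are all hypothetical.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = radians(lat2 - lat1)
    dlam = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def listings_within(listings, lat, lon, radius_km):
    """Return (distance_km, name) pairs inside the radius, nearest first."""
    hits = []
    for item in listings:
        distance = haversine_km(lat, lon, item["lat"], item["lon"])
        if distance <= radius_km:
            hits.append((distance, item["name"]))
    return sorted(hits)


# Hypothetical listings, for illustration only.
sample = [
    {"name": "Uptown Books", "lat": 40.7831, "lon": -73.9712},
    {"name": "Philly Diner", "lat": 39.9526, "lon": -75.1652},
    {"name": "Downtown Cafe", "lat": 40.7138, "lon": -74.0050},
]

for distance, name in listings_within(sample, 40.7128, -74.0060, radius_km=10):
    print(f"{name}: {distance:.1f} km")
```

A loop like this is fine for a few thousand listings; larger directories usually push the distance filter into the database with a spatial index, which is worth asking vendors about.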

Pricing typically starts around $199 for a single site license, with additional costs for extended support and updates. The platform’s modular pricing—separating core functionality from add-ons—lets you invest only in features you actually need rather than paying for unused capabilities.

While Directory Engine may not offer the breadth of features found in all-in-one solutions, its specialization in geographical directories creates clear advantages for location-focused businesses. The development team has consistently improved mapping capabilities, staying ahead of trends in location-based search and discovery.

Choosing the Right Platform for Your Needs

Selecting the ideal white label directory software begins with an honest assessment of your specific requirements, not a comparison of feature lists. A platform’s success depends on how well it aligns with your business model, technical capabilities, and growth trajectory.

Start by defining your directory’s primary purpose and target audience. A real estate directory requires different functionality than a restaurant guide or B2B services marketplace. List your absolute must-have features separately from nice-to-have capabilities—this prevents feature creep from driving decisions.

Assess your technical comfort level honestly. Some platforms offer extensive customization but require HTML/CSS knowledge or comfort with WordPress administration. Others prioritize ease of use over flexibility. Neither approach is inherently better; it depends on your skills and willingness to learn technical systems.

The Testing Phase You Can’t Skip

Almost all quality directory platforms offer demos or trial periods. Failing to thoroughly test before committing is the most common mistake I see directory owners make. Marketing materials and sales demonstrations showcase ideal scenarios—real-world use reveals limitations and frustrations.

During trials, upload at least 50 sample listings across multiple categories. Test the complete user journey from both administrator and visitor perspectives. Can users easily find listings? Is the submission process intuitive? How long does bulk editing take? These practical questions matter far more than feature checklists.
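If the platform you are trialing supports CSV import, a short script can produce the 50 test listings for you. The sketch below is only an illustration: the column names and categories are assumptions, so rename them to match the import template of the platform you are testing.

```python
import csv
import io
import itertools

# Assumed category names and column headers; adjust to the platform's template.
CATEGORIES = ["Restaurants", "Plumbers", "Dentists", "Auto Repair", "Florists"]
FIELDS = ["name", "category", "address", "phone", "website"]


def sample_listings(count=50):
    """Generate placeholder listings spread evenly across the categories."""
    rows = []
    for i, category in zip(range(1, count + 1), itertools.cycle(CATEGORIES)):
        rows.append({
            "name": f"Sample {category} {i:03d}",
            "category": category,
            "address": f"{100 + i} Test Street, Sampletown",
            "phone": f"555-01{i % 100:02d}",
            "website": f"https://example.com/sample-{i:03d}",
        })
    return rows


def to_csv(rows):
    """Serialize the rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(sample_listings(50))
print(csv_text.splitlines()[0])
print(f"{len(csv_text.splitlines()) - 1} sample rows")
```

Write `csv_text` to a file and run it through the platform’s importer; how the import handles 50 rows across five categories tells you a lot about how bulk operations will feel later.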

Pay special attention to the administrative interface, because that is where you’ll spend most of your own time. An impressive frontend with a clunky backend creates daily frustration that compounds over time.

| Decision Factor | Questions to Ask | Why It Matters |
|---|---|---|
| Scalability | How many listings before performance degrades? | Prevents costly migrations as you grow |
| Total Cost | What’s the 3-year cost including all fees? | Hidden costs add up quickly |
| SEO Readiness | Does it auto-generate sitemaps and schema? | Determines organic traffic potential |
| Support Quality | What’s average response time for issues? | Critical when problems arise |
| Exit Strategy | Can I export all data if I switch platforms? | Prevents vendor lock-in |

Cost Considerations Beyond Sticker Price

Calculate total cost of ownership over three years, not just initial purchase or monthly subscription fees. Include hosting costs ($10-$200 monthly depending on performance requirements), SSL certificates, premium add-ons, extended support, and potential migration costs if you outgrow the platform.

One-time purchase platforms often appear cheaper initially but may require separate hosting, SSL certificates, and paid support contracts. Subscription platforms bundle these costs but create ongoing expenses that add up over time. Neither model is inherently better; it depends on your budget structure and long-term plans.

For example: A $299 one-time platform + $25/month hosting + $100/year SSL + $200/year support = approximately $899 in year one and $600 per year after. Compare this to a $195/month all-inclusive subscription = $2,340 annually. Over three years, the one-time purchase costs about $2,099 against $7,020 for the subscription, a saving of roughly $4,921, but it requires managing separate services.
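A comparison like this is easy to get wrong by hand, so it can help to recompute it from the stated inputs. The sketch below does that; the function and parameter names are hypothetical, and the default values are simply the figures from the example, so swap in your own quotes.

```python
def one_time_cost(years, license_fee=299, hosting_per_month=25,
                  ssl_per_year=100, support_per_year=200):
    """Total cost of a one-time license plus separately bought services."""
    recurring_per_year = hosting_per_month * 12 + ssl_per_year + support_per_year
    return license_fee + recurring_per_year * years


def subscription_cost(years, fee_per_month=195):
    """Total cost of an all-inclusive monthly subscription."""
    return fee_per_month * 12 * years


for years in (1, 2, 3):
    owned = one_time_cost(years)
    rented = subscription_cost(years)
    print(f"{years} yr: one-time ${owned:,} vs subscription ${rented:,} "
          f"(difference ${rented - owned:,})")
```

With the example’s inputs, the one-time route comes to $899 after one year and $2,099 after three, against $7,020 for three years of subscription. Migration or developer costs are not modeled here and would need to be added for a full picture.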

Implementation Best Practices

Even the best white label directory software requires thoughtful implementation to succeed. The platform provides tools; your strategy determines results.

Start with a clear content strategy before adding listings. Define your category structure, listing fields, and quality standards. Inconsistent categorization and incomplete listings undermine user trust and search rankings. It’s tempting to add hundreds of listings quickly, but 50 high-quality, well-categorized listings outperform 500 inconsistent ones.

Prioritize SEO from day one. Configure schema markup, submit your sitemap to Google Search Console, optimize category page content, and ensure clean URL structures. Google’s local business structured data guidelines describe the markup that helps search engines understand a listing and present its details in local results.
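Most platforms generate the sitemap for you, but knowing what a valid one looks like makes it easier to verify during a trial. The following sketch builds a minimal sitemap in the sitemaps.org format using only the Python standard library; the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # YYYY-MM-DD
    xml_body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body


# Placeholder listing URLs, for illustration only.
sitemap = build_sitemap([
    ("https://example.com/listings/example-bakery", "2025-01-15"),
    ("https://example.com/listings/example-plumber", "2025-01-20"),
])
print(sitemap)
```

If a platform’s generated sitemap is missing listing pages or category pages, that is worth raising with the vendor before you commit.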

Monetization Strategy

Don’t try to monetize everything immediately. Start with one or two revenue streams, prove they work, then gradually add others. Common successful progressions include: free listings to build inventory → premium featured listings → banner advertising → membership tiers.

The most successful directories I’ve worked with follow a pattern: launch with 100% free listings to build inventory and user base, introduce paid featured listings once you have 200+ free listings, add banner advertising when you reach 10,000+ monthly visitors, then introduce membership tiers for businesses wanting ongoing visibility.

Test pricing carefully. Start higher than you think necessary—it’s easier to lower prices than raise them. Many directory owners undervalue their platform, charging $20/month for featured listings when the market would easily support $50-$100. Run small A/B tests with different pricing tiers to find the sweet spot.
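One simple way to read a pricing test is to compare revenue per visitor rather than raw conversion rate, since a higher price can win even when fewer people buy. The numbers below are invented purely to show the calculation, and the function name is hypothetical.

```python
def revenue_per_visitor(price, visitors, purchases):
    """Average revenue earned per visitor shown this price."""
    if visitors == 0:
        return 0.0
    return price * purchases / visitors


# Invented test results, for illustration only.
variants = [
    {"price": 20, "visitors": 500, "purchases": 25},
    {"price": 50, "visitors": 500, "purchases": 15},
    {"price": 100, "visitors": 500, "purchases": 5},
]

for v in variants:
    rpv = revenue_per_visitor(v["price"], v["visitors"], v["purchases"])
    rate = v["purchases"] / v["visitors"]
    print(f"${v['price']}/month: {rate:.1%} conversion, ${rpv:.2f} per visitor")

best = max(variants, key=lambda v: revenue_per_visitor(
    v["price"], v["visitors"], v["purchases"]))
print(f"Highest revenue per visitor: ${best['price']}/month")
```

In this made-up data the $50 tier earns the most per visitor despite converting less often than the $20 tier. With samples this small the differences may be noise, so let a test run long enough to gather a meaningful number of purchases before acting on it.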

Growth and Scaling

Plan for growth from the beginning. Choose a platform that can handle 10x your current needs without requiring migration. Platform migrations are expensive and time-consuming, and they risk ranking losses that can take months to recover from.

Monitor performance metrics religiously: page load times, bounce rates, listing submission completion rates, and conversion rates for paid upgrades. These indicators reveal problems before they become crises. A gradual increase in bounce rate often signals performance issues from database bloat or inefficient queries.

As your directory grows, invest in professional hosting if you haven’t already. The $10/month shared hosting that works fine for 100 listings will crater under 5,000 listings. Specialized WordPress hosting or VPS solutions maintain performance as you scale.

Frequently Asked Questions

What is white label business directory software?

White label business directory software is a pre-built, customizable platform that lets you create and launch directory websites under your own brand name. These solutions provide complete infrastructure for managing listings, users, and monetization while allowing you to customize appearance and functionality to match your brand without visible references to the original software provider.

How much does white label directory software cost?

Pricing varies significantly based on features and licensing models. Subscription platforms typically range from $50 to $500 monthly, while one-time purchase options cost between $150 and $2,000. When calculating total investment, include hosting ($10-$200/month), SSL certificates, support contracts, and premium add-ons. The least expensive option isn’t always most cost-effective long-term.

Can I customize white label directory software completely?

Customization capabilities vary between platforms. Most solutions allow you to customize branding elements (colors, logos, fonts), listing fields, categories, page layouts, and monetization options. Advanced platforms may offer API access or custom code integration for deeper customization. However, there’s typically a tradeoff between extreme customization and ease of implementation.

Which white label directory platform is best for WordPress users?

TurnKey Directories and ListingPro are both excellent WordPress-native solutions. TurnKey Directories excels in scalability (maintaining performance up to 50,000 listings) and pairs that with one-time pricing. ListingPro provides extensive theme compatibility and plugin integration. Both shorten the learning curve if you’re already familiar with WordPress administration.

Is white label directory software SEO-friendly?

Quality directory platforms include essential SEO features like customizable meta fields, clean URLs, XML sitemaps, and mobile optimization. However, SEO capabilities vary significantly. The most SEO-friendly platforms offer automatic schema markup implementation, canonical URL support, and granular indexing controls. Always verify that platforms support contemporary SEO best practices before committing.

How long does it take to launch a directory with white label software?

With white label solutions, you can launch a functional directory in 1-3 weeks depending on your preparation and the platform’s complexity. This includes setup, branding customization, initial content creation, and testing. Custom development typically requires 3-6 months for comparable functionality, so white label solutions usually cut time to market by 75% or more.

Can white label directory software handle multiple revenue streams?

Most premium platforms support multiple monetization methods including paid listings, featured placements, membership subscriptions, banner advertising, lead generation, and affiliate marketing. The best platforms, such as TurnKey Directories and eDirectory, offer five or more built-in revenue options. Ensure your chosen platform natively supports your intended business model to avoid costly custom development.

What’s the difference between white label software and custom development?

White label software is pre-built and ready to deploy with customization options, typically costing $150-$2,000 initially with rapid implementation (days to weeks). Custom development builds a directory specifically for your needs, costing $50,000+ with 3-6 month timelines. White label typically cuts the initial cost by 95% or more but allows less extreme customization; custom provides complete control at significantly higher investment.

How do I migrate from one directory platform to another?

Migration requires careful planning to preserve SEO value and user data. Export all listings, user accounts, and content from your current platform. Map fields to your new platform’s structure, import data in stages, implement 301 redirects from old URLs to new ones, and resubmit your sitemap to search engines. Plan for 2-4 weeks of migration work plus 1-2 months for search rankings to stabilize.
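The redirect step is the one most often skipped, and it is straightforward to script once you have a mapping of old URLs to new ones. The sketch below turns such a mapping into Apache `Redirect 301` rules (the mod_alias directive); it assumes an Apache server and path-based old URLs, and the example URLs and function name are hypothetical.

```python
from urllib.parse import urlsplit


def redirect_rules(url_pairs):
    """Turn (old_url, new_url) pairs into Apache 'Redirect 301' lines."""
    rules = []
    seen = set()
    for old_url, new_url in url_pairs:
        old_path = urlsplit(old_url).path or "/"
        if old_path in seen:
            continue  # keep only the first mapping for each old path
        seen.add(old_path)
        rules.append(f"Redirect 301 {old_path} {new_url}")
    return rules


# Hypothetical old-to-new mapping exported during a migration.
pairs = [
    ("https://example.com/biz/joes-pizza", "https://example.com/listings/joes-pizza/"),
    ("https://example.com/biz/ace-plumbing", "https://example.com/listings/ace-plumbing/"),
    ("https://example.com/biz/joes-pizza", "https://example.com/listings/duplicate/"),
]

for rule in redirect_rules(pairs):
    print(rule)
```

Note that `Redirect` matches by path prefix and ignores query strings, so old URLs that rely on parameters such as `?id=123` need rewrite rules instead. Spot-check a sample of old URLs after launch to confirm each one returns a 301 to the right page.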

What support should I expect from white label directory providers?

Support quality varies dramatically between providers. Look for platforms offering documentation, video tutorials, community forums, and direct support channels (email, chat, or phone). Premium platforms typically respond to support tickets within 24-48 hours. Read actual user reviews about support quality before committing, as marketing materials rarely reflect real-world support experiences.

Ready to Launch Your Directory?

The perfect directory platform isn’t about having the most features—it’s about finding the solution that aligns with your specific goals, technical comfort level, and growth ambitions. Success comes from matching the tool to your vision and executing consistently.

Next steps: Identify your top three requirements, test demo versions of platforms meeting those needs, and calculate 3-year total cost of ownership. The platform that balances your immediate needs with long-term scalability will emerge clearly from this process. Don’t let analysis paralysis delay your launch—an imperfect directory that exists beats a perfect one that never launches.