How to Download Business Directories Data: 5 Legal Sources & Compliance Workflows

Business directories data—complete with company names, addresses, phone numbers, industries, and contact details—is the fuel that powers modern sales, marketing, and market intelligence operations. Yet most organizations struggle not with finding sources, but with navigating the murky legal landscape, ensuring data quality, and building sustainable collection workflows that won’t land them in regulatory hot water.

Here’s what the generic guides won’t tell you: the difference between success and failure in acquiring business directories data isn’t about which scraping tool you use or which database you buy. It’s about understanding the compliance boundaries, recognizing when to license versus extract, and implementing quality controls that transform raw data into actionable intelligence. I’ve watched companies waste six figures on data that violated terms of service, only to face legal threats and have to delete everything.

The reality? Downloading business directories data in 2026 requires equal parts technical know-how, legal awareness, and operational discipline—and this guide will show you exactly how to balance all three.

TL;DR – Quick Takeaways

- Public data sources (government databases, open data portals) offer legal safety but limited freshness and coverage

- Licensed providers deliver comprehensive, compliant data but require ongoing subscription costs

- Web scraping can be legal and effective when you respect robots.txt, terms of service, and rate limits

- APIs provide the safest path to automated data collection with clear usage rights

- Data quality degrades 25-30% annually—validation and enrichment workflows are mandatory, not optional

- Compliance documentation protects your organization from regulatory and legal exposure

Source Landscape for Business Directory Data: What to Collect and Where to Get It

Before you download a single record, you need to understand what constitutes valuable business directories data and which sources align with your compliance posture and budget constraints. Not all directory data is created equal, and the source you choose fundamentally shapes your legal risk profile.

Business directory data typically includes Name, Address, Phone (NAP) as foundational elements, plus industry classifications (NAICS or SIC codes), employee counts, revenue estimates, website URLs, email addresses, operating hours, and sometimes review data or social media profiles. The depth and accuracy of these fields vary wildly across sources—government databases might offer authoritative NAP data but lack email contacts, while commercial providers include technographic details but charge premium prices.

I remember consulting for a B2B software company that scraped every free directory they could find, ending up with 200,000 records that had a 60% duplicate rate and 40% invalid phone numbers. They would have been better off with 20,000 high-quality licensed records. The lesson? Source selection determines data utility more than volume ever will.

Core Public Data Sources: Definitions, Coverage, and Typical Fields

Public data sources represent the safest legal territory for business directories data collection. Public data portals such as Data.gov provide access to authoritative, government-maintained business data, often without licensing restrictions beyond attribution requirements.

Key public sources include:

- Secretary of State business registries: Every U.S. state maintains a searchable database of registered businesses, including corporation names, registered agents, addresses, and formation dates. Data freshness varies by state (some update weekly, others quarterly), and bulk download options exist in many jurisdictions.

- County and municipal databases: Local governments publish business license data, building permits, and tax registration records. These provide hyperlocal coverage but require aggregating across hundreds of jurisdictions.

- Industry-specific regulatory databases: The FDA maintains food facility registrations, the FCC tracks telecommunications providers, and similar agencies offer searchable directories within their regulatory domains.

- Open data initiatives: Many cities publish economic development datasets including business counts by ZIP code, industry breakdowns, and employer statistics.

The major limitation of public sources? They typically capture only businesses required to register or obtain licenses—sole proprietorships operating under personal names, informal service providers, and businesses in non-regulated industries often don’t appear. Coverage is comprehensive for incorporated entities but spotty for smaller operators.

Popular Paid/Licensed Providers vs. Free/Public Sources

Commercial data providers invest heavily in aggregating, cleaning, and enriching business information from multiple sources. They offer significantly broader coverage and more frequent updates than free alternatives, but at substantial cost.

| Source Type | Coverage | Update Frequency | Typical Cost | Licensing |
|---|---|---|---|---|
| Public/Government | Registered entities only | Quarterly to annually | Free | Public domain |
| Licensed Providers | Comprehensive multi-source | Weekly to monthly | $5,000-$50,000/year | Strict usage terms |
| Web Scraping | Depends on target sites | As often as you scrape | Infrastructure costs | Varies by site TOS |

Major commercial providers like ZoomInfo, Dun & Bradstreet, and Data Axle (formerly Infogroup) combine government records with proprietary data collection (phone verification, web crawling, surveys) to build comprehensive profiles. Their contracts typically restrict data resale, limit export volumes, and prohibit integration with competitor services.

The decision matrix is straightforward: if you need immediate access to millions of validated records with contact-level details and can justify the subscription cost, licensed providers make sense. If you’re targeting a specific geography or industry where public records provide adequate coverage, or if you have technical resources to build scraping infrastructure, public sources and targeted extraction offer cost-effective alternatives.

Legal Compliance and Responsible Data Collection: Navigating the Minefield

The legal landscape for business directories data collection has grown increasingly complex as courts address web scraping cases and privacy regulations expand globally. What was acceptable five years ago may now violate terms of service or data protection laws, potentially exposing your organization to litigation and regulatory penalties.

Understanding where legal boundaries lie isn’t about finding loopholes—it’s about building sustainable data operations that won’t require costly pivots when regulations tighten or court precedents shift. The companies that succeed long-term are those that treat compliance as a competitive advantage rather than an obstacle.

Legal Landscape and Risk Factors: What You Can and Cannot Do

Web scraping legality remains a gray area in many jurisdictions, with recent court decisions providing some clarity but leaving significant ambiguity. Across discussions of the legal considerations for web scraping and data extraction, the general principle is that scraping publicly accessible information is typically legal, but violating computer access laws or breaching contracts (terms of service) can create liability.

Key legal principles that govern data collection:

- Computer Fraud and Abuse Act (CFAA) limits: In the U.S., the CFAA prohibits accessing computers without authorization. Courts have generally held that scraping publicly accessible websites doesn’t violate the CFAA, but circumventing technical barriers (like login walls or CAPTCHAs) can trigger liability.

- Terms of Service enforceability: Website terms that explicitly prohibit scraping create a contractual obligation. Violating these terms may not result in criminal charges but can lead to civil lawsuits for breach of contract or trespass to chattels (when scraping causes server harm).

- Copyright and database rights: Facts themselves aren’t copyrightable, but compilations and presentation can be. Scraping raw factual data (company names, addresses) is generally safe, but copying entire website structures or creative elements creates infringement risk.

- Privacy regulations (GDPR, CCPA): These laws primarily govern personal data. Business directory information about companies is usually outside their scope, but contact details for individuals (named employees, personal email addresses) may trigger obligations for lawful processing basis, consent, and data subject rights.

I worked with a marketing agency that scraped LinkedIn for prospect data, violating LinkedIn’s terms of service. They received a cease-and-desist, had to delete all collected data, and nearly faced a lawsuit that would have cost more in legal fees than the data was worth. The lesson? Terms of service violations carry real consequences, even when the underlying activity might be technically legal.

Recommended Compliance Practices and Governance

Building a defensible data collection operation requires documented policies, technical safeguards, and organizational accountability. The goal is creating an audit trail that demonstrates reasonable efforts to comply with applicable laws and respect data source terms.

Essential compliance practices include:

- Maintain a data inventory: Document every data source, collection method, date acquired, and applicable terms or licenses. This becomes critical if you face regulatory audits or legal discovery.

- Implement usage policies: Define acceptable uses for collected data within your organization. Restrict access to those with legitimate business needs, and prohibit activities that violate source terms (like resale when prohibited).

- Create retention schedules: Data should be deleted when no longer needed for its original purpose. This reduces regulatory exposure and demonstrates data minimization principles.

- Document consent and lawful basis: For any personal data collected, record the legal basis for processing (legitimate interest, consent, contract necessity) as required under GDPR and similar frameworks.

- Establish vendor review processes: When purchasing data, require vendors to warrant they obtained it legally and have rights to sublicense it to you. Get these representations in writing.
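
The inventory and retention practices above can be sketched as a small data structure. This is a minimal illustration, not a prescribed schema: the `SourceRecord` fields, the sample sources, and the retention periods are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class SourceRecord:
    """One row of a data inventory (field names are illustrative)."""
    name: str
    collection_method: str       # e.g. "bulk download", "licensed api", "scrape"
    acquired_on: date
    license_terms: str
    retention_days: int          # assumed retention period for this source
    contains_personal_data: bool = False

    def delete_by(self) -> date:
        """Date after which the data should be deleted under the schedule."""
        return self.acquired_on + timedelta(days=self.retention_days)

def overdue_sources(inventory, today):
    """Names of sources whose retention period has already expired."""
    return [s.name for s in inventory if s.delete_by() < today]

# Hypothetical inventory entries
inventory = [
    SourceRecord("state_registry_export", "bulk download", date(2025, 1, 10),
                 "public record, attribution required", 365),
    SourceRecord("vendor_contacts", "licensed api", date(2025, 9, 1),
                 "internal use only, no resale", 180, contains_personal_data=True),
]

print(overdue_sources(inventory, today=date(2026, 2, 1)))
```

Keeping the license terms and the retention period on the same record means a single query can answer both "what are we allowed to do with this?" and "should we still have it?".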

Guidance on compliance considerations for web crawlers and data collection recommends that organizations implement rate limiting, user agent identification, and server load monitoring to demonstrate respect for website infrastructure even when scraping is technically permitted.

When should you prefer APIs or licensed data over scraping? The answer depends on risk tolerance and use case. If the data will power mission-critical operations, if you plan to resell or publicly redistribute it, or if your organization has low risk tolerance for litigation, licensed sources with clear usage rights are worth the premium. For internal research, competitive intelligence, or one-time analysis projects, carefully executed scraping within legal boundaries can be appropriate.

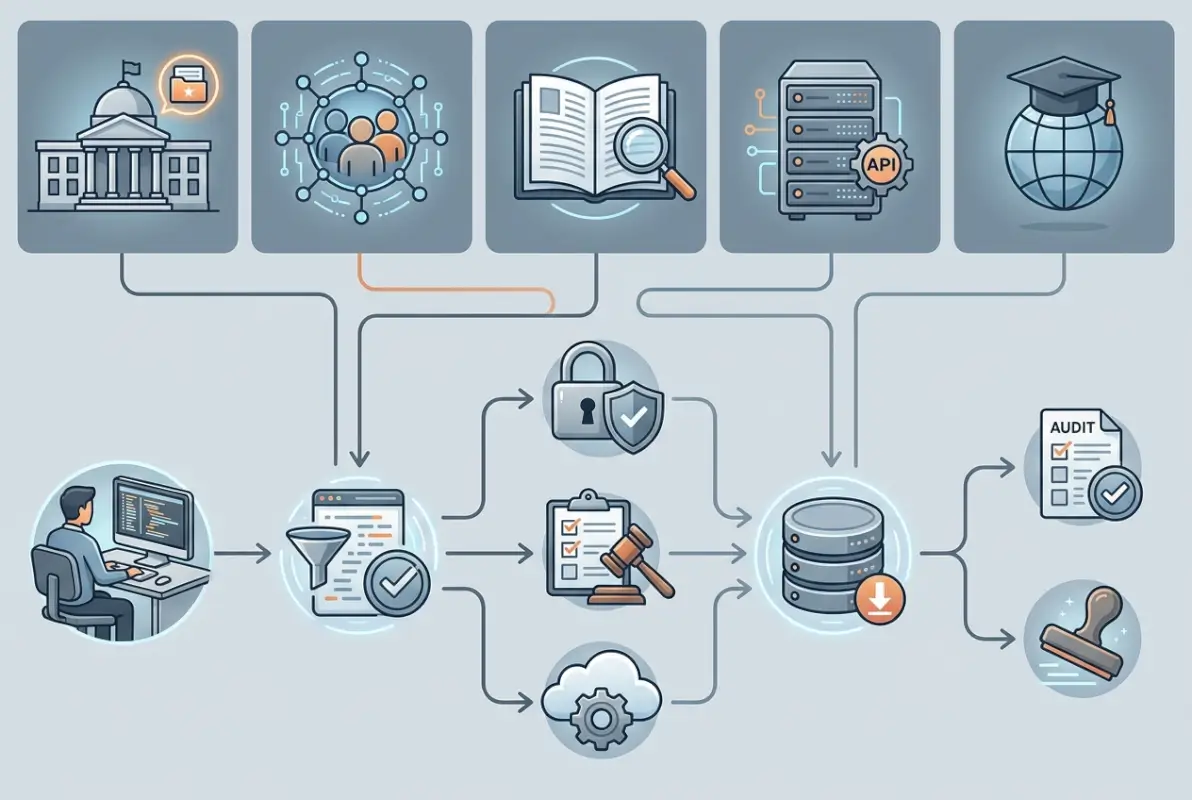

Practical Data Collection Workflows for 2026: Two Proven Approaches

Theory is worthless without execution. Knowing where to find business directories data and understanding compliance requirements means nothing if you can’t translate that knowledge into repeatable workflows. The most successful data operations I’ve observed follow one of two paths: disciplined scraping with strong guardrails, or API-first licensing with systematic enrichment.

The workflow you choose should match your technical capabilities, budget constraints, and risk tolerance. Hybrid approaches—combining licensed baseline data with targeted scraping for specific gaps—often deliver optimal results for organizations with diverse data needs.

Workflow A: Public Data Scraping with Guardrails

Scraping public business directories requires balancing data acquisition speed with technical and legal safety measures. Rushing the process leads to IP blocks, corrupted data, and potential legal exposure. The disciplined approach takes longer upfront but produces sustainable, defensible results.

Step-by-step scraping workflow:

- Planning and target selection (Week 1): Identify specific directories to scrape, document their robots.txt policies and terms of service, and verify the data is publicly accessible without authentication. Create a spreadsheet logging each source, its allowable use, and scraping frequency limits.

- Infrastructure setup (Week 1-2): Configure proxies (residential or datacenter) to distribute requests and avoid IP blocking. Set up a database to store raw scraped data before cleaning. Implement logging to track success rates and errors.

- Scraper development and testing (Week 2-3): Build scrapers using tools like Octoparse for simple cases or custom Python scripts (Beautiful Soup, Scrapy) for complex sites. Test on small samples (50-100 records) before scaling to verify data extraction accuracy. Implement exponential backoff when receiving rate limit errors.

- Production scraping with rate limits (Week 3-4): Execute scraping with delays between requests (minimum 1-2 seconds, longer for smaller sites). Monitor for blocking or CAPTCHAs, and adjust request patterns if needed. Scrape during off-peak hours to minimize server load.

- Initial data cleaning (Week 4-5): Remove duplicate records based on fuzzy matching of company names and addresses. Validate email formats and phone number structures. Flag records with missing critical fields for manual review or deletion.

- Quality validation (Week 5-6): Sample random records and manually verify accuracy against original sources. Check for encoding issues (special characters corrupted) and structural problems (data in wrong fields). Establish baseline quality metrics (% valid emails, % complete records).
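
Two of the guardrails in the steps above, checking robots.txt before requesting a page and backing off exponentially on rate limit errors, can be sketched with the Python standard library. This is a minimal sketch under stated assumptions: the robots.txt content, the `directory.example` URLs, and the `example-directory-bot` user agent are hypothetical, and `fetch` stands in for whatever HTTP client you use (assumed to return a `(status, body)` pair).

```python
import time
from urllib.robotparser import RobotFileParser

# Stand-in robots.txt content; a real run would download the target site's file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

def load_policy(robots_text):
    """Parse robots.txt text into a RobotFileParser without any network call."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser

def request_delay(parser, user_agent, minimum=1.0):
    """Use the site's Crawl-delay when present, never less than `minimum` seconds."""
    declared = parser.crawl_delay(user_agent)
    return max(minimum, float(declared)) if declared is not None else minimum

def fetch_with_backoff(fetch, url, max_retries=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) -> (status, body), doubling the wait after each 429/503."""
    for attempt in range(max_retries + 1):
        status, body = fetch(url)
        if status not in (429, 503):
            break
        if attempt < max_retries:
            sleep(base_delay * 2 ** attempt)
    return status, body

policy = load_policy(ROBOTS_TXT)
AGENT = "example-directory-bot"  # hypothetical user agent string

print(policy.can_fetch(AGENT, "https://directory.example/listings?page=1"))
print(policy.can_fetch(AGENT, "https://directory.example/private/admin"))
print(request_delay(policy, AGENT))
```

Passing `sleep` as a parameter keeps the backoff logic testable without real waiting; production code would leave the default `time.sleep` in place and would likely add random jitter to the delay.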

Common pitfalls to avoid: scraping too aggressively and triggering anti-bot measures, failing to handle pagination correctly and missing large portions of listings, not accounting for dynamic JavaScript content that requires browser automation, and neglecting to monitor for site structure changes that break scrapers.

I once built a scraper for state business registries that worked perfectly for three months, then suddenly started returning empty results. The site had added AJAX loading without changing the URL structure. The fix was simple once identified, but the problem would have gone unnoticed without monitoring. Always implement alerts for unexpected data volume drops.
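
A volume-drop alert of this kind can be very small. The sketch below compares the latest run's record count with the average of recent runs; the 50% threshold and the sample counts are assumptions to tune for your own sources.

```python
def volume_dropped(history, current, threshold=0.5):
    """Return True when `current` falls below `threshold` times the recent average.

    history: record counts from previous successful runs
    current: record count from the latest run
    """
    if not history:
        return False  # nothing to compare against yet
    baseline = sum(history) / len(history)
    return current < baseline * threshold

recent_runs = [1000, 980, 1020]  # hypothetical counts from earlier runs
print(volume_dropped(recent_runs, 0))    # an empty result set should alert
print(volume_dropped(recent_runs, 950))  # normal variation should not
```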

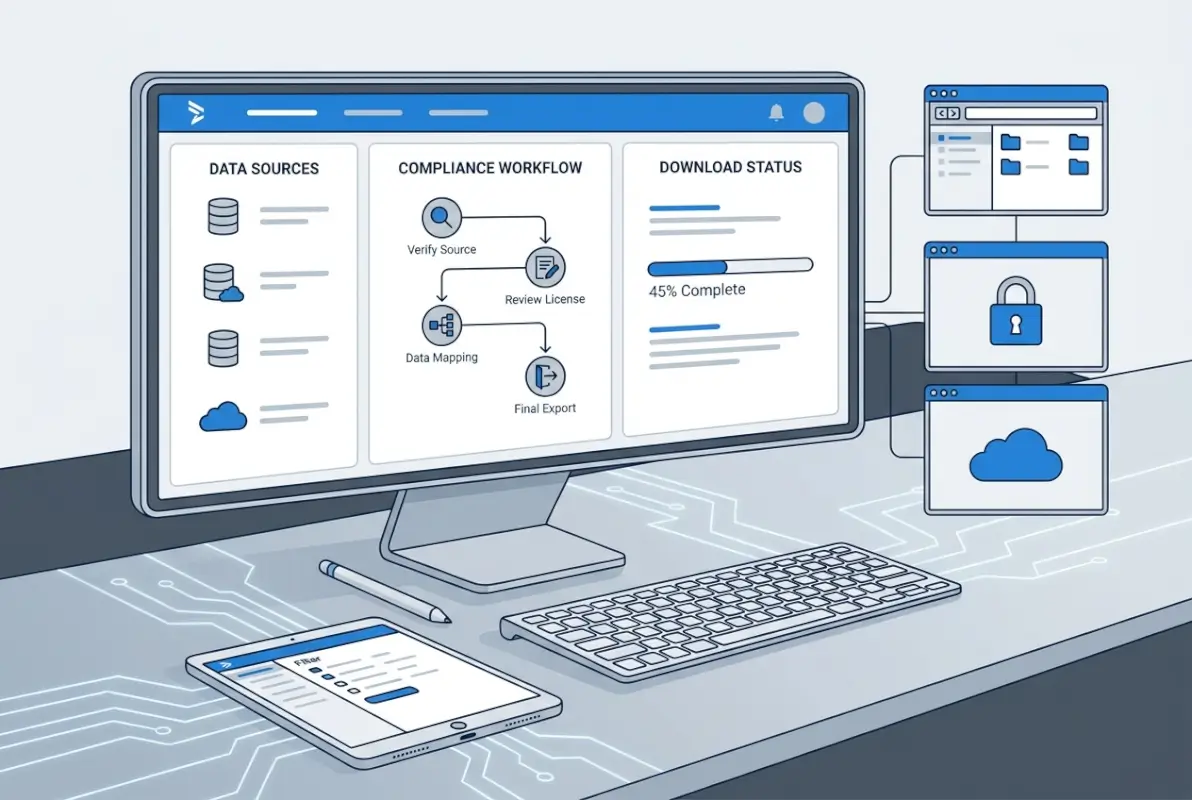

Workflow B: API-First Data Acquisition and Licensing

API-based data collection offers superior reliability and legal clarity compared to scraping, though at higher cost. Most commercial directory providers and some public sources now offer API access with clear rate limits and usage terms.

API workflow implementation:

- Evaluate and select API provider (Week 1): Compare coverage, data freshness, field completeness, pricing, and licensing terms across providers. Request trial access to verify data quality matches claims. For public APIs, review documentation for rate limits and authentication requirements.

- Authentication and access setup (Week 1): Obtain API keys and configure authentication (usually OAuth 2.0 or API key in headers). Set up secure credential storage (environment variables, secrets management) rather than hardcoding keys.

- Develop integration scripts (Week 2): Build request logic with proper error handling, rate limit respect (typically 10-100 requests per minute depending on provider), and retry mechanisms for transient failures. Implement pagination to retrieve complete result sets.

- Data synchronization schedule (Ongoing): APIs enable incremental updates rather than full refreshes. Query for records modified since last sync to capture changes efficiently. Schedule daily or weekly syncs depending on data change frequency and business needs.

- License compliance monitoring (Ongoing): Track usage against license limits (record counts, API calls, user seats). Set up alerts when approaching thresholds. Document how data is used to ensure compliance with permitted use cases.

- Multi-source enrichment (Week 3+): Combine API data from multiple sources to create comprehensive records. Use company identifiers (DUNS numbers, tax IDs) to match records across sources. Implement conflict resolution rules when sources disagree (e.g., trust most recently updated source).
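
The pagination, rate limiting, and incremental sync steps above can be sketched independently of any particular provider. In this sketch `fetch_page` is a hypothetical callable standing in for a real API client; the cursor-based paging and the `modified_since` filter are assumptions, since each provider defines its own parameters. An in-memory fake is used so the example runs without network access.

```python
import time

def fetch_all(fetch_page, modified_since, max_per_minute=60, sleep=time.sleep):
    """Collect every page of records changed after `modified_since`.

    fetch_page(cursor, modified_since) must return (records, next_cursor),
    with next_cursor set to None on the last page.
    """
    interval = 60.0 / max_per_minute  # spacing that keeps us under the limit
    records, cursor = [], None
    while True:
        page, cursor = fetch_page(cursor, modified_since)
        records.extend(page)
        if cursor is None:
            return records
        sleep(interval)

# In-memory stand-in for a provider API (hypothetical data and paging scheme).
DATA = [{"id": i, "updated": f"2026-01-{i:02d}"} for i in range(1, 8)]

def fake_fetch_page(cursor, modified_since, page_size=3):
    matching = [r for r in DATA if r["updated"] > modified_since]
    start = cursor or 0
    end = start + page_size
    next_cursor = end if end < len(matching) else None
    return matching[start:end], next_cursor

changed = fetch_all(fake_fetch_page, "2026-01-02", sleep=lambda seconds: None)
print([r["id"] for r in changed])
```

Storing the timestamp of the last successful sync and passing it as `modified_since` on the next run is what turns a full refresh into an incremental update.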

APIs provide structured, consistent data formats (typically JSON or XML) that eliminate parsing challenges inherent in scraping. They also offer clear usage rights—your license agreement explicitly defines what you can and cannot do with the data, removing legal ambiguity.

The main constraint is cost: commercial APIs for business directories data typically charge per record, per user seat, or via annual subscriptions ranging from thousands to hundreds of thousands of dollars for comprehensive access. For organizations with budget constraints, combining free public APIs (SEC filings, Census data, local government business licenses) with selective scraping creates a cost-effective alternative.

Data Quality, Normalization, and Enrichment: Turning Raw Data into Intelligence

Raw business directories data is rarely usable in its initial state. Inconsistent formatting, duplicate records, missing fields, and outdated information plague datasets from every source, whether scraped or purchased. Organizations that skimp on quality processes end up with garbage-in-garbage-out analytics and failed outreach campaigns.

I’ve seen marketing teams blame their messaging or targeting when the real problem was 40% invalid email addresses and 30% wrong phone numbers in their database. Data quality isn’t a nice-to-have technical exercise—it directly determines ROI on every dollar spent using that data.

Data Quality Checks to Perform After Download

Systematic validation catches errors before they contaminate downstream processes. Implement these checks as automated scripts that run immediately after data acquisition, flagging or quarantining records that fail standards.

Critical validation rules include:

- NAP consistency verification: Check that addresses are complete (street, city, state, ZIP) and match USPS standards. Validate that phone numbers follow national formatting (10 digits in the U.S., appropriate country codes internationally). Ensure business names don’t contain obvious errors (multiple spaces, all caps, special characters).

- Email format validation: Verify email addresses match RFC standards (proper @ placement, valid characters, TLD exists). Go beyond regex by using email verification services that check if domains have MX records and whether mailboxes accept mail.

- Geographic coordinate accuracy: If latitude/longitude is included, verify coordinates fall within the stated city/state boundaries. Mismatched coordinates often indicate geo-coding errors or data entry mistakes.

- Industry classification consistency: Ensure NAICS or SIC codes are valid and match business descriptions. Watch for generic codes (like “999999” for unclassified) that indicate incomplete categorization.

- Temporal validation: Check that founding dates aren’t in the future, employee counts fall within reasonable ranges for claimed revenue, and last-updated timestamps are recent enough for your use case.
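
Several of these rules can be expressed as one function that returns a list of problems per record. This is a minimal sketch covering only a subset of the checks: the field names are assumptions, the email test is a simple syntax pattern rather than full RFC validation or mailbox verification, and the phone rule assumes U.S. numbers.

```python
import re
from datetime import date

# Simple syntax check only; it does not confirm the domain or mailbox exists.
EMAIL_RE = re.compile(r"^[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(\.[A-Za-z0-9-]+)+$")

def validate(record, today):
    """Return a list of human-readable problems found in one record dict."""
    problems = []
    for field in ("name", "street", "city", "state", "zip"):
        if not record.get(field):
            problems.append(f"missing {field}")

    digits = re.sub(r"\D", "", record.get("phone") or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the U.S. country code
    if len(digits) != 10:
        problems.append("phone is not a 10-digit U.S. number")

    email = record.get("email")
    if email and not EMAIL_RE.match(email):
        problems.append("email fails syntax check")

    naics = record.get("naics") or ""
    if not (naics.isdigit() and 2 <= len(naics) <= 6) or naics == "999999":
        problems.append("NAICS code missing, malformed, or unclassified")

    founded = record.get("founded")
    if founded and founded > today:
        problems.append("founding date is in the future")
    return problems

good = {"name": "Acme Tools", "street": "12 Main St", "city": "Austin",
        "state": "TX", "zip": "78701", "phone": "(512) 555-0100",
        "email": "info@acme.example", "naics": "423710", "founded": date(1999, 5, 1)}
bad = dict(good, street="", phone="555-0100", email="info@@acme",
           naics="999999", founded=date(2030, 1, 1))

print(validate(good, today=date(2026, 1, 1)))
print(validate(bad, today=date(2026, 1, 1)))
```

Returning problems instead of raising on the first failure makes it easy to quarantine a record along with every reason it failed.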

Normalization and Deduplication Strategies

Normalization transforms inconsistently formatted data into standardized structures that enable accurate analysis and matching. Deduplication eliminates redundant records that waste storage and create confusion about which version represents truth.

Normalization best practices:

- Standardize company name variants: Create rules to convert “ABC Company Inc.” and “ABC Company, Incorporated” and “ABC Co.” to a canonical form. Strip legal suffixes (Inc, LLC, Ltd) into separate fields for matching purposes while preserving original names for display.

- Parse addresses into components: Break addresses into street number, street name, unit/suite, city, state, and ZIP fields. Use USPS address validation APIs to correct misspellings and standardize abbreviations (Street vs St, Avenue vs Ave).

- Format phone numbers consistently: Convert all phone numbers to E.164 international format (+1XXXXXXXXXX for U.S.) for storage, even if displaying in national format (XXX-XXX-XXXX) in user interfaces.

- Categorize using standard taxonomies: Map varied industry descriptions to standard NAICS codes. This enables apples-to-apples comparisons across datasets that use different classification schemes.
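
The name and phone rules above can be sketched as two small functions. The suffix list is an illustrative assumption and is deliberately aggressive (it treats "Company" and "Co" as suffixes so the three example variants collapse to one form); the phone function handles U.S. numbers only.

```python
import re

# Illustrative suffix list; extend or trim it for your own data.
LEGAL_SUFFIXES = {"inc", "incorporated", "llc", "ltd", "limited",
                  "corp", "corporation", "co", "company"}

def split_legal_suffix(name):
    """Return (canonical_name, suffix) with trailing legal suffixes split off.

    The canonical name is for matching only; keep the original for display.
    """
    tokens = re.sub(r"[.,]", " ", name).lower().split()
    suffix = []
    while len(tokens) > 1 and tokens[-1] in LEGAL_SUFFIXES:
        suffix.insert(0, tokens.pop())
    return " ".join(tokens), " ".join(suffix)

def to_e164_us(raw):
    """Convert a U.S. phone number to E.164, or return None if it is not valid."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    if len(digits) != 10:
        return None
    return "+1" + digits

for variant in ("ABC Company Inc.", "ABC Company, Incorporated", "ABC Co."):
    print(split_legal_suffix(variant))
print(to_e164_us("(512) 555-0100"))
```

For international numbers a dedicated phone-parsing library is a safer choice than extending this digit-counting rule.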

Deduplication requires fuzzy matching since exact duplicates are rare. Businesses change names slightly, move addresses, or appear in different sources with variations. Implement multi-pass deduplication:

- Pass 1 – Exact matching: Flag records with identical company name and ZIP code as likely duplicates.

- Pass 2 – Fuzzy name matching: Use string similarity algorithms (Levenshtein distance, Jaro-Winkler) to identify similar names at the same address.

- Pass 3 – Phone/email matching: Records sharing phone numbers or email addresses are probably the same entity, even if names differ (catches business name changes).

- Manual review of ambiguous cases: Flag potential duplicates with 70-90% confidence for human review rather than auto-merging and risking false positives.
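
The passes above can be sketched as a single pairwise classifier. Note the substitution: this sketch uses the standard library's `difflib.SequenceMatcher` ratio as a stand-in for Levenshtein or Jaro-Winkler, so the scores will differ from those algorithms. The record fields, the 0.70 and 0.90 thresholds, and the choice to auto-flag shared phone numbers or emails are assumptions.

```python
from difflib import SequenceMatcher

def similarity(a, b):
    """Case-insensitive similarity in [0, 1] (difflib ratio, not Levenshtein)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def classify_pair(a, b, auto=0.90, review=0.70):
    """Return 'duplicate', 'review', or 'distinct' for two record dicts."""
    # Pass 1: identical name and ZIP code.
    if a["name"].lower() == b["name"].lower() and a["zip"] == b["zip"]:
        return "duplicate"
    verdict = "distinct"
    # Pass 2: similar names at the same address.
    if a["address"].lower() == b["address"].lower():
        score = similarity(a["name"], b["name"])
        if score >= auto:
            return "duplicate"
        if score >= review:
            verdict = "review"  # the ambiguous band goes to a human
    # Pass 3: a shared phone number or email, even if names differ.
    for key in ("phone", "email"):
        if a.get(key) and a.get(key) == b.get(key):
            return "duplicate"
    return verdict

base = {"name": "Acme Tools", "address": "12 Main St", "zip": "78701",
        "phone": "+15125550100"}
variant = {"name": "Acme Tooling", "address": "12 Main St", "zip": "78701"}
renamed = {"name": "Summit Hardware", "address": "40 Oak Ave", "zip": "78702",
           "phone": "+15125550100"}

print(classify_pair(base, variant))
print(classify_pair(base, renamed))
```

Comparing every pair is quadratic in the number of records, so larger datasets usually group candidates first (for example by ZIP code) and only compare within each group.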

When merging duplicate records, establish precedence rules: most recently updated data takes priority, or trusted sources (direct from company website) override less reliable sources (aggregated directories). Track merge history so you can audit decisions later.
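
One way to encode such precedence rules is to rank the duplicate records and take each field from the highest-ranked record that has a value. The sketch below ranks by source trust first and recency second, which is only one of the orderings described above; the trust scores, source names, and field list are assumptions.

```python
# Hypothetical trust ranking; higher wins.
SOURCE_TRUST = {"company_website": 3, "licensed_provider": 2, "aggregated_directory": 1}

def merge(records, fields=("name", "phone", "email")):
    """Merge duplicate records field by field.

    Returns (merged_record, history), where history lists which source
    supplied each field so the decision can be audited later.
    """
    ranked = sorted(
        records,
        key=lambda r: (SOURCE_TRUST.get(r["source"], 0), r["updated"]),
        reverse=True,
    )
    merged, history = {}, []
    for field in fields:
        for rec in ranked:
            if rec.get(field):
                merged[field] = rec[field]
                history.append((field, rec["source"], rec["updated"]))
                break
    return merged, history

directory_copy = {"source": "aggregated_directory", "updated": "2026-01-15",
                  "name": "Acme Tools", "phone": "+15125550100",
                  "email": "sales@acme.example"}
website_copy = {"source": "company_website", "updated": "2025-11-02",
                "name": "Acme Tools Inc.", "phone": "+15125550199", "email": None}

merged, history = merge([directory_copy, website_copy])
print(merged)
print(history)
```

Because the merge is per field, a trusted but incomplete record does not erase values that only a less trusted source supplied.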

Enrichment fills gaps in your baseline data by appending information from additional sources. Common enrichment strategies include geocoding addresses to add latitude/longitude, appending employee counts and revenue estimates from business intelligence databases, adding technographic data (what software companies use) from web crawls, and supplementing with social media profiles and review counts.

Practical Ethics and Risk Mitigation for Directory Data Projects

Beyond legal compliance, ethical data handling builds trust with customers and protects your organization from reputational damage. The court of public opinion doesn’t care about technical legal compliance if your data practices feel invasive or exploitative. Building ethical guardrails demonstrates corporate responsibility and reduces regulatory scrutiny.

The organizations I respect most treat data ethics as a competitive differentiator rather than a constraint. They ask not just “can we do this legally?” but “should we do this, and would we want others to know we did it?”

Privacy, Consent, and PII Considerations

Business directory information sits in a gray area between clearly public data (like business names and addresses) and personal information that triggers privacy obligations. How you navigate this boundary determines your regulatory exposure and ethical standing.

Key privacy considerations for business directories data:

- Distinguish business vs. personal contact information: A company’s main phone number and general email (info@company.com) are business data. Named employee contacts, personal email addresses, and direct dial numbers may constitute personal information subject to privacy laws.

- Assess lawful basis for processing personal data: Under GDPR and similar frameworks, you need a legal justification to process personal information. Legitimate interest works for B2B marketing to business contacts in their professional capacity, but consent is safer for sensitive uses.

- Honor opt-out and suppression requests: Even when not legally required, providing easy opt-out mechanisms demonstrates respect for individual preferences. Maintain suppression lists and scrub them against all data before use.

- Implement data subject access rights: Be prepared to respond to requests to know what data you hold about individuals, correct inaccuracies, or delete records. This requires maintaining data lineage (knowing which systems contain what data).

- Apply purpose limitation principles: Collect data for specific, stated purposes and don’t repurpose it for unrelated uses without additional justification. Marketing data shouldn’t be used for employment screening without clear disclosure.
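
Scrubbing a dataset against a suppression list, as described above, can be sketched in a few lines. Matching on a lowercased, trimmed email address is an assumption; real suppression lists may also key on phone numbers or domains.

```python
def normalize_email(email):
    """Lowercase and trim so trivial variations still match."""
    return email.strip().lower()

def scrub(records, suppressed_emails):
    """Split records into (kept, removed) based on the suppression list."""
    blocked = {normalize_email(e) for e in suppressed_emails}
    kept, removed = [], []
    for rec in records:
        email = rec.get("email")
        if email and normalize_email(email) in blocked:
            removed.append(rec)
        else:
            kept.append(rec)
    return kept, removed

# Hypothetical contacts and opt-outs
contacts = [
    {"name": "Dana Reyes", "email": "Dana.Reyes@acme.example"},
    {"name": "Front Desk", "email": "info@bolt.example"},
    {"name": "No Email On File", "email": None},
]
suppression_list = [" dana.reyes@acme.example "]

kept, removed = scrub(contacts, suppression_list)
print([r["name"] for r in kept])
print([r["name"] for r in removed])
```

Returning the removed records as well as the kept ones gives you a log entry showing that each opt-out was actually honored.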

I consulted for a company that purchased business directories data for sales prospecting, then used it to screen potential hires by checking whether they worked for competitors. This secondary use hadn’t been disclosed and arguably violated purpose limitation principles, creating unnecessary legal exposure. The lesson? Define and document intended uses upfront, and stick to them.

Security, Storage, and Incident Response

Business directories data may seem low-risk compared to financial or health information, but breaches still create competitive intelligence leaks, regulatory penalties (if personal data is included), and reputational damage. Securing data stores and having incident response plans isn’t paranoia—it’s basic operational hygiene.

Essential security measures include:

- Encryption at rest and in transit: Database encryption prevents unauthorized access even if storage media is compromised. TLS/SSL for all data transfers protects against interception.

- Role-based access controls: Limit data access to employees with legitimate business needs. Sales teams need contact information, but shouldn’t access complete raw datasets or export tools.

- Audit logging: Track who accessed what data when, creating accountability and enabling forensic investigation if breaches occur.

- Regular security assessments: Penetration testing and vulnerability scanning identify weaknesses before attackers exploit them.

- Vendor security reviews: If using cloud storage or third-party data processors, verify they maintain appropriate security certifications (SOC 2, ISO 27001) and contractual data protection obligations.

Incident response planning prepares your organization to react quickly when breaches occur, minimizing damage and demonstrating due diligence to regulators. Your plan should define detection mechanisms (how you’ll know a breach happened), containment procedures (isolating affected systems), notification obligations (who must be informed and within what timeframe), and remediation steps (fixing vulnerabilities that enabled the breach).

For platforms managing business directory data, understanding how to organize Active Directory for a business environment helps in implementing proper access controls and user management. If you’re building your own directory platform, solutions like TurnKey Directories provide WordPress-based infrastructure with built-in security features and compliance-friendly data handling.

Frequently Asked Questions

What is business directory data and how is it used in market intelligence?

Business directory data includes company names, addresses, phone numbers, industry classifications, employee counts, and contact details compiled into searchable databases. Organizations use it for sales prospecting, market segmentation, competitive analysis, territory planning, and identifying partnership opportunities. Quality directory data enables targeted outreach and informed strategic decisions.

Is it legal to scrape business directory data from public websites?

Scraping publicly accessible business information is generally legal, but you must respect website terms of service and robots.txt files. Violating explicit prohibitions in terms of service can create breach of contract liability. Always check these policies before scraping, implement rate limiting to avoid server harm, and consult legal counsel for high-value or sensitive projects.

When should I use an API instead of web scraping for business data?

APIs are preferable when you need mission-critical reliability, clear usage rights, structured data formats, and automated updates. They eliminate legal ambiguity and technical brittleness inherent in scraping. Choose APIs for production systems powering sales or marketing. Scraping works for one-time research projects, niche sources without APIs, or cost-constrained scenarios where occasional failures are acceptable.

How often should directory data be updated to stay current?

Business data decays 25-30% annually due to company closures, relocations, personnel changes, and phone number reassignments. High-touch sales operations should refresh quarterly, while less time-sensitive uses can update semi-annually. Monitor bounce rates and connection success rates to determine optimal refresh frequency for your specific use case and budget.

What are common data quality issues in directory datasets and how can I fix them?

Common issues include duplicate records with slight variations, incomplete or missing fields, outdated contact information, inconsistent formatting, and incorrect categorization. Fix them through automated validation rules, fuzzy matching deduplication, phone and email verification services, address standardization APIs, and manual review of high-value records. Establish quality thresholds and quarantine records below standards.

How can I validate a scraped dataset for accuracy and completeness?

Validate by randomly sampling 100-200 records and manually verifying them against original sources. Check completeness by measuring percentage of populated fields across required attributes. Compare record counts against known business population estimates for your target geography or industry. Cross-reference against authoritative sources like Secretary of State databases to verify company existence.
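
The sampling and completeness checks can be sketched as follows. The field names and sample records are assumptions, and the fixed seed is only there so the same sample can be reproduced during a review.

```python
import random

def completeness(records, required):
    """Return {field: share of records with a non-empty value}."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required}
    return {field: sum(1 for r in records if r.get(field)) / total
            for field in required}

def sample_for_review(records, k=100, seed=0):
    """Pick up to k records at random for manual verification."""
    return random.Random(seed).sample(records, min(k, len(records)))

# Hypothetical scraped records
scraped = [
    {"name": "Acme Tools", "phone": "+15125550100", "email": ""},
    {"name": "Bolt Bakery", "phone": None, "email": "hello@bolt.example"},
    {"name": "Cedar Dental", "phone": "+15125550142", "email": None},
    {"name": "", "phone": "+15125550177", "email": None},
]

print(completeness(scraped, ("name", "phone", "email")))
print(len(sample_for_review(scraped, k=2)))
```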

What are best practices to avoid getting blocked while scraping directories?

Implement delays between requests (minimum 1-2 seconds), rotate IP addresses using residential proxies, respect robots.txt directives, scrape during off-peak hours, and monitor for rate limit errors so you can back off when they appear. Identify your scraper with a consistent user agent string for transparency rather than randomizing it, and never overwhelm small sites with aggressive request volumes.

How do I handle personal data in directory datasets under GDPR and CCPA?

Distinguish business information from personal data (named employee contacts). Establish lawful basis for processing personal data, typically legitimate interest for B2B marketing. Implement opt-out mechanisms, honor suppression requests, and be prepared to fulfill data subject access requests. Document your legal basis and maintain records of processing activities as required by regulations.

What licensing considerations should I be aware of when using third-party directory data?

Review whether the license permits your intended use (internal analysis, marketing, resale). Check restrictions on data sharing with third parties, export volumes, and integration with competitor services. Verify data freshness guarantees and update frequency. Get vendor warranties that they obtained data legally and have rights to sublicense it. Document all terms in writing.

How can I merge data from multiple sources without creating duplicates?

Use multi-pass fuzzy matching on company name plus location, phone number, or email address. Implement confidence scoring (0-100%) for potential matches and auto-merge high-confidence matches while flagging medium-confidence pairs for manual review. Establish precedence rules for conflicting data (most recent update wins, or trusted source priority). Track merge history for audit trails.

Building Sustainable Business Directory Data Operations

Downloading business directories data successfully requires balancing technical execution, legal compliance, and quality management in equal measure. The organizations that extract maximum value from directory data treat it as a strategic asset requiring ongoing investment rather than a one-time acquisition.

Your path forward depends on your specific constraints and objectives. If you have budget and need comprehensive coverage with legal certainty, licensed API providers deliver the most reliable foundation. If you’re targeting niche markets where public sources provide adequate coverage, disciplined scraping with strong compliance guardrails offers a cost-effective alternative. Most sophisticated operations combine both approaches—licensing baseline data for core needs while supplementing with targeted scraping for specialized gaps.

Start Your Data Project Right

Transform raw business information into competitive advantage:

- Audit current data quality and document gaps in coverage or freshness

- Define specific use cases and success metrics before acquiring new data

- Establish compliance framework with documented policies and source tracking

- Implement validation workflows that catch quality issues before data enters production systems

- Schedule regular refreshes to combat natural data decay and maintain accuracy

Remember that the highest-quality data means nothing without proper governance and ethical handling. Build compliance documentation from day one, implement security controls appropriate to your data sensitivity, and treat privacy obligations seriously even when not legally mandatory. Your reputation for responsible data stewardship becomes a competitive advantage when regulatory scrutiny intensifies.

The most successful data operations I’ve observed share common traits: they invest heavily in quality processes rather than just acquisition, they document everything for audit trails and institutional knowledge, they treat data as living assets requiring maintenance rather than static purchases, and they balance automation with human oversight for critical decisions.

Start small and prove value before scaling—a pilot project with 5,000 high-quality records that drives measurable results builds organizational support for expanding to comprehensive datasets. Track metrics like lead conversion rates, campaign response rates, and research efficiency gains to demonstrate ROI and justify continued investment.

Whether you’re building your first business directory or optimizing existing data operations, the principles remain constant: prioritize quality over quantity, compliance over convenience, and sustainable processes over quick wins. The data ecosystem will continue evolving, with new regulations, technical capabilities, and business models emerging, but organizations grounded in these fundamentals will adapt successfully while competitors scramble to retrofit compliance into broken workflows.