How to Build an Online Directory: 7 Essential Steps for Success

Building an online directory isn’t just about creating another website—it’s about architecting a discovery engine that connects people with exactly what they’re searching for. Whether someone’s hunting for a trusted local plumber at 11 PM or researching boutique wedding photographers across three states, your directory becomes the bridge between need and solution. The beauty of this business model? It scales beautifully, generates multiple revenue streams, and solves real problems for both searchers and service providers.

Here’s something most guides won’t tell you upfront: the directories that thrive aren’t the ones with the most listings. They’re the ones with the most relevant listings and the clearest value proposition. I’ve watched aspiring directory owners spend months collecting thousands of generic business listings, only to launch to crickets because they never defined who they were serving or why anyone should care. The successful approach flips this entirely: you start with a razor-sharp niche, validate actual demand, then build systematically around that core insight.

TL;DR – Quick Takeaways

- Niche specificity wins – Broad directories compete with Yelp and Google; focused directories dominate specific markets

- Data quality over quantity – 200 verified, active listings outperform 2,000 stale entries every time

- Multiple revenue streams – Featured placements, memberships, ads, and lead-gen create sustainable income

- SEO architecture matters from day one – Location pages, schema markup, and clean URLs drive organic discovery

- Launch lean, iterate fast – Start with core features, validate with real users, expand based on data

- Directory vs. marketplace – Directories connect; marketplaces transact (choose your model deliberately)

Step 1: Define a Clear Niche and Value Proposition

The number one mistake new directory builders make? Trying to be everything to everyone. When you create “another business directory,” you’re competing head-on with billion-dollar platforms that have decade-long head starts. But when you create “the definitive directory for certified organic bakeries in the Pacific Northwest” or “the only curated list of LGBTQ+-friendly mental health providers,” you’ve carved out defensible territory.

Niche specificity improves trust because it signals expertise and curation. It boosts relevance because every listing matters to your target audience. And it drives conversion because visitors arrive with clear intent already aligned with your offerings. The best directory models start by solving one problem exceptionally well before expanding horizontally.

How to Validate Your Niche Before Building

Enthusiasm doesn’t equal demand (I learned this the hard way with a pet project that excited exactly three people, including my mom). Before you commit months to development, run these validation exercises:

- Competitor analysis: Search for existing directories in your space. If you find none, that’s either brilliant or concerning. Often it means someone tried and failed, or the market’s too small. Finding 2-3 competitors actually validates demand.

- Keyword research: Use tools to check monthly search volume for terms like “[your niche] near me” or “best [your niche] in [location].” You want hundreds or thousands of monthly searches, not dozens.

- Audience interviews: Talk to 10-15 potential users. Ask about their current search process, frustrations, and whether they’d pay for better discovery. If half say “I just use Google,” dig deeper into what Google isn’t giving them.

- Provider interviews: Contact businesses you’d list. Would they claim a free profile? Would they pay for premium placement? What features would actually help them get customers?

Examples of Successful Directory Niches

Real-world directories that thrive focus on specific verticals with clear user intent. Local service directories work when they go deep on a metro area or specialize by trade (think “licensed electricians” not “all contractors”). Real estate portals succeed by focusing on property types or neighborhoods. Event directories win by curating specific event categories—wedding venues, conference spaces, or food festivals rather than “all events everywhere.”

The pattern? Each successful niche has built-in search behavior (users already hunting for these services or resources) plus identifiable providers willing to pay for visibility. That combination creates a sustainable foundation for everything that follows.

Step 2: Plan the Directory Structure and Data Model

Your directory’s taxonomy is its skeleton—get this wrong and every future feature becomes painful to implement. The goal is creating an intuitive organizational structure that mirrors how real people actually search while remaining flexible enough to accommodate growth.

Designing an Effective Taxonomy

Start with 5-12 top-level categories that represent your niche’s major segments. Under each, create 3-10 subcategories. For example, a home services directory might have “Plumbing” as a category with subcategories like “Emergency Plumbing,” “Drain Cleaning,” “Water Heater Installation,” and “Pipe Repair.” This structure serves both SEO (creating distinct landing pages) and user experience (intuitive browsing).

Location hierarchies follow a similar principle. For local directories, structure might be Country > State/Region > City > Neighborhood. For national directories with sparse listings, you might skip neighborhoods and go straight to cities. The key is matching granularity to your listing density: don’t create 50 neighborhood pages if you only have 30 total listings.

Tags offer flexible cross-categorization. A wedding photographer might be tagged “outdoor,” “destination,” “LGBTQ+ friendly,” and “available for travel.” Tags create discovery paths beyond rigid categories, helping users find exactly what they need through multiple entry points.
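To make the taxonomy ideas above concrete, here is a minimal Python sketch of a listing that carries a category, a location path, and a set of tags. The class name, field names, and sample studios are all hypothetical, and a real directory would keep this in a database rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class Listing:
    """One directory entry placed in the taxonomy (all names are illustrative)."""
    name: str
    category: str
    subcategory: str
    location: tuple  # hierarchy path, e.g. ("US", "WA", "Seattle")
    tags: set = field(default_factory=set)


def in_location(listings, prefix):
    """Listings whose location path starts with the given prefix."""
    prefix = tuple(prefix)
    return [item for item in listings if item.location[:len(prefix)] == prefix]


def with_tags(listings, wanted):
    """Listings that carry every requested tag."""
    wanted = set(wanted)
    return [item for item in listings if wanted <= item.tags]


# Made-up sample data for illustration only.
listings = [
    Listing("Studio A", "Photography", "Wedding", ("US", "WA", "Seattle"),
            {"outdoor", "destination"}),
    Listing("Studio B", "Photography", "Wedding", ("US", "OR", "Portland"),
            {"outdoor"}),
]

washington = in_location(listings, ("US", "WA"))
destination = with_tags(listings, {"outdoor", "destination"})
```

Storing the location as a path means the same lookup serves country, state, and city pages, while tags stay independent of the category tree.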

Data Collection and Verification Workflows

You have three primary data acquisition strategies, each with tradeoffs:

- Manual curation: You research and add listings yourself. Pros: highest quality, complete information, accurate from day one. Cons: doesn’t scale, time-intensive, can’t grow faster than your research capacity.

- Partnership programs: Work with industry associations, chambers of commerce, or franchise networks for bulk listing access. Pros: quick initial population, pre-verified providers. Cons: may include inactive businesses, requires negotiation and often revenue sharing.

- Self-submission with validation: Let businesses claim profiles or submit listings, then verify before publishing. Pros: scales infinitely, keeps data current, businesses invested in accuracy. Cons: requires moderation systems, quality varies, spam submissions inevitable.

Most successful directories use a hybrid approach: seed the platform with curated core listings to establish credibility, then open self-submission to scale while maintaining approval gates. This balances growth velocity with quality control and protects the value proposition for users browsing your directory.

| Data Field | Required? | Purpose |
|---|---|---|
| Business Name | Yes | Core identification |
| Category | Yes | Taxonomy placement |
| Location/Address | Yes | Local SEO, maps |
| Phone | Recommended | Contact, lead tracking |
| Website URL | Recommended | Verification, user value |
| Hours | Optional | User convenience |
| Description | Optional | SEO, user information |
| Photos | Optional | Engagement, premium upsell |
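One way to enforce the required, recommended, and optional split from the table is a small check that runs before a listing is published. This is only a sketch: the dictionary keys and the `check_listing` helper are assumptions made for illustration, not part of any particular directory platform.

```python
# Field groups mirror the table above; the key names are hypothetical.
REQUIRED = ("business_name", "category", "address")
RECOMMENDED = ("phone", "website_url")
OPTIONAL = ("hours", "description", "photos")
ALL_FIELDS = REQUIRED + RECOMMENDED + OPTIONAL


def check_listing(record):
    """Report whether a submitted record can be published and how complete it is."""
    missing_required = [f for f in REQUIRED if not record.get(f)]
    missing_recommended = [f for f in RECOMMENDED if not record.get(f)]
    filled = sum(1 for f in ALL_FIELDS if record.get(f))
    return {
        "publishable": not missing_required,
        "missing_required": missing_required,
        "missing_recommended": missing_recommended,
        "completeness": filled / len(ALL_FIELDS),
    }


# Made-up submission used to exercise the check.
report = check_listing({
    "business_name": "Joe's Plumbing",
    "category": "Plumbing",
    "address": "123 Example St, Seattle, WA",
    "phone": "(206) 555-0100",
})
```

A completeness score like this can also drive nudges to business owners, for example prompting them to add the website or hours they left blank.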

Directory vs. Marketplace: A Critical Distinction

Before you go further, clarify whether you’re building a directory or a marketplace—they’re fundamentally different beasts. A directory connects users with providers; transactions happen off-platform (think Yelp or Yellow Pages). A marketplace facilitates transactions directly (think Airbnb or Upwork). Directories monetize through visibility (paid listings, ads), while marketplaces monetize through transaction fees or commissions.

Most entrepreneurs find directories simpler to launch because you don’t need payment processing, escrow systems, dispute resolution, or transaction insurance. You’re purely in the information and discovery business. Marketplaces offer higher revenue per user but require significantly more complex infrastructure and legal considerations. Choose deliberately based on your niche, resources, and long-term vision.

Step 3: Choose the Right Technology and Platform

The technology decision shapes everything from your launch timeline to your ongoing operational costs. The good news? You have more viable options now than ever before. The challenge? Each path involves meaningful tradeoffs.

Platform Evaluation Criteria

When comparing options, weigh these factors against your specific situation:

- Scalability: Can the platform handle 100 listings today and 100,000 tomorrow without a complete rebuild?

- Ease of use: Will you spend weeks learning complex systems or can you launch quickly?

- Integration ecosystem: Does it connect with payment processors, email tools, analytics, and CRM systems you need?

- Listing features: Does it support the specific fields, media types, and functionality your niche requires?

- Developer support: When things break or you need customization, is help available and affordable?

No-Code/DIY Options vs. Custom Development

No-code directory builders and WordPress plugins offer the fastest path to launch. Platforms like Directorist, GeoDirectory, or Brilliant Directories provide pre-built directory functionality you can customize through settings and templates. You can launch in days or weeks rather than months, often for under $500 initial investment. The limitation? You’re constrained by the platform’s capabilities and design flexibility.

Custom development gives unlimited flexibility but requires significant investment—typically $10,000 minimum for even basic custom builds, and easily $50,000+ for sophisticated platforms. You control every pixel and feature, but you also own every bug and maintenance headache. For most first-time directory builders, custom development is overkill. Start with no-code or low-code solutions, prove the business model, then invest in custom development if you hit platform limitations.

Building Monetization-Ready Architecture

The platform should natively support or easily integrate these revenue-critical features:

- Multiple listing tiers (free, basic paid, premium) with different feature sets

- Membership subscriptions with recurring billing

- Featured listing placement and sponsored results

- Banner ad zones and ad management

- Lead capture forms with analytics tracking

- Claim and upgrade flows for business owners

Test these workflows during platform evaluation—don’t just assume they work. Create a test listing, upgrade it to premium, process a payment, then downgrade it. If this basic flow feels clunky or requires workarounds, imagine the friction for real customers.

Step 4: Build Foundational SEO and Content Strategy

A directory without traffic is just an empty database. SEO drives the compounding growth that makes directories valuable over time—each new listing potentially creates a new indexed page, each category page targets additional keywords, each location page captures local search intent.

SEO-Driven Architecture Fundamentals

Clean URL structures matter immensely for directories. Instead of “yourdirectory.com/listing?id=12345,” aim for “yourdirectory.com/plumbing/seattle/joes-plumbing.” This readable, hierarchical structure helps search engines understand your content and helps users trust the links they click. It also creates natural keyword inclusion without stuffing—the URL itself signals relevance.
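As a rough illustration of how such paths could be generated, the sketch below turns a category, city, and business name into a hierarchical URL path. The `slugify` and `listing_path` helpers are hypothetical names; many platforms ship their own slug logic, so treat this as one possible approach.

```python
import re
import unicodedata


def slugify(text):
    """Lowercase, strip accents and apostrophes, and join words with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", text)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    ascii_text = ascii_text.lower().replace("'", "")
    return re.sub(r"[^a-z0-9]+", "-", ascii_text).strip("-")


def listing_path(category, city, business_name):
    """Build a /category/city/business-name style path."""
    return "/" + "/".join(slugify(part) for part in (category, city, business_name))


path = listing_path("Plumbing", "Seattle", "Joe's Plumbing")
```

Slugs should stay stable once published. If a business is renamed, keeping the old path (or redirecting it) avoids breaking links that are already indexed.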

Schema markup tells search engines exactly what your pages contain. For directories, implement LocalBusiness schema for individual listings, BreadcrumbList schema for category navigation, and AggregateRating schema if you include reviews. This structured data makes your listings eligible for rich results, which can improve click-through rates from search results.
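For a sense of what LocalBusiness markup looks like in practice, here is a minimal sketch that serializes one listing as JSON-LD. The property names come from the schema.org vocabulary; the function name and the sample business details are made up for illustration.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, phone, url):
    """Serialize one listing as schema.org LocalBusiness JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "telephone": phone,
        "url": url,
    }
    return json.dumps(data, indent=2)


# Hypothetical listing values.
markup = local_business_jsonld(
    "Joe's Plumbing", "123 Example St", "Seattle", "WA", "98101",
    "+1-206-555-0100", "https://example.com",
)
```

The resulting string is typically placed inside a `<script type="application/ld+json">` tag on the listing page, and it is worth running a sample page through a structured data testing tool before rolling it out site-wide.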

Category and location landing pages are your SEO workhorses. Each should include:

- Original, helpful content (150-500 words) explaining the category or location context

- Natural keyword inclusion without over-optimization

- Unique value beyond just listing links—tips, considerations, or local insights

- Related category links to distribute PageRank throughout your site

Content Strategy for Local and “Near Me” Visibility

Hub pages for major categories and locations create topical authority. A home services directory might create comprehensive guides like “Complete Guide to Hiring Plumbers in Seattle” that links to relevant listings while providing educational content. These hub pages target broader informational keywords and establish your directory as an authoritative resource, not just a link farm.

Local SEO content captures “near me” searches and location-specific queries. For each major location you serve, create pages that address local considerations, regulations, average pricing, and common questions. Someone searching “wedding photographer near me” in Austin has different considerations than someone in rural Montana. Your content should reflect that difference and point readers to the relevant curated listings.

Technical SEO Priorities

Beyond content and structure, ensure your directory handles these technical fundamentals:

- Mobile-responsive design (most local searches happen on mobile)

- Fast page load speeds (especially important for listing pages with images)

- XML sitemaps that update automatically as you add listings

- Pagination handling for category pages with hundreds of listings

- Canonical tags to prevent duplicate content issues across filtered views

- HTTPS security (non-negotiable for user trust and search rankings)
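Two items from the list above, automatically updated sitemaps and canonical tags for filtered views, can be sketched with the Python standard library. The function names are hypothetical, the URLs are placeholders, and the canonical helper assumes that filtered views differ from the main page only by their query string.

```python
import xml.etree.ElementTree as ET
from datetime import date
from urllib.parse import urlsplit, urlunsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (absolute_url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def canonical_url(url):
    """Drop the query string and fragment so filtered views share one canonical URL."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


# Placeholder pages; in practice these would come from the listings database.
sitemap = build_sitemap([
    ("https://example.com/plumbing/seattle/", date(2024, 1, 15)),
    ("https://example.com/plumbing/seattle/joes-plumbing", date(2024, 2, 1)),
])
canonical = canonical_url("https://example.com/plumbing/seattle/?sort=rating&open_now=1")
```

Regenerating the sitemap whenever a listing is added or re-verified keeps `lastmod` meaningful, and the canonical value would go into a `<link rel="canonical">` tag on each filtered page.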

Step 5: Data Acquisition, Verification, and Compliance

A directory is only as valuable as its data quality. Ten perfect listings beat a hundred mediocre ones when users are trying to make decisions. The challenge is maintaining quality while achieving the coverage necessary to be useful.

Balancing Breadth and Depth

Early-stage directories face a chicken-and-egg problem: users won’t visit without listings, businesses won’t claim listings without users. The solution is strategic seeding with high-quality core listings that make your directory immediately useful even with limited coverage. Focus on the most-searched categories and highest-value locations first rather than trying to achieve complete coverage everywhere.

For a local services directory in Portland, you might start with 50 carefully curated plumbers, electricians, and HVAC contractors—the most-searched trades—rather than 300 random businesses across every possible category. This focused approach creates genuine utility faster and establishes quality standards from day one.

Verification Methods That Scale

Different verification strategies suit different growth stages:

- Manual approval: Every submission goes through human review before publishing. Ideal when you’re small and establishing standards. Doesn’t scale beyond a few submissions daily.

- Automated validation: Check submitted phone numbers and addresses against databases, verify websites resolve and match business names, flag suspicious patterns. Filters obvious spam while allowing legitimate listings through quickly.

- Community reporting: Let users flag outdated or incorrect information. Implement a “report this listing” feature and respond quickly to reports. Crowdsourced quality control that scales with your user base.

- Periodic re-verification: Send automated emails every 6-12 months asking businesses to confirm their information is current. Mark unresponsive listings as “Not Recently Verified” to signal potential staleness to users.

The best systems combine these approaches: automated checks catch obvious problems, human review handles edge cases and disputes, and community reporting provides ongoing maintenance at scale.
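The automated and periodic checks can start out very simple. The sketch below is a format-only filter, not a lookup against any external database: it counts phone digits, checks that the website is an absolute http(s) URL, and labels listings whose last verification is older than a chosen threshold. The field names, the 7 to 15 digit range, and the 365-day default are assumptions to adjust for your niche.

```python
import re
from datetime import date, timedelta
from urllib.parse import urlsplit


def automated_checks(listing):
    """Return a list of format problems found in a submitted listing."""
    problems = []
    digits = re.sub(r"\D", "", listing.get("phone", ""))
    if not 7 <= len(digits) <= 15:
        problems.append("phone number has an unexpected number of digits")
    parts = urlsplit(listing.get("website_url", ""))
    if parts.scheme not in ("http", "https") or not parts.netloc:
        problems.append("website is not an absolute http(s) URL")
    return problems


def verification_label(last_verified, today, max_age_days=365):
    """Label a listing by how recently the business confirmed its details."""
    if today - last_verified > timedelta(days=max_age_days):
        return "Not Recently Verified"
    return "Verified"


clean = automated_checks({"phone": "(206) 555-0100", "website_url": "https://example.com"})
flagged = automated_checks({"phone": "12", "website_url": "example.com"})
label = verification_label(date(2023, 1, 1), today=date(2024, 6, 1))
```

Submissions with an empty problem list could be fast-tracked, while flagged ones go to the manual review queue described above.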

Privacy and Compliance Considerations

Directory operators walk a line between public information aggregation and privacy protection. Establish clear policies about what information you collect, how you use it, and who can access it. For self-submitted listings, implement explicit opt-in rather than assuming consent.

Consider these compliance essentials:

- Clear privacy policy explaining data handling

- Terms of service addressing listing accuracy responsibilities

- Opt-out mechanisms for businesses that don’t want to be listed

- GDPR compliance if serving European users (even indirectly)

- CAN-SPAM compliance for any email outreach

- Do Not Call considerations if tracking phone leads

Legal compliance isn’t optional, and ignorance provides no protection. Consult with an attorney familiar with online platforms in your jurisdiction to establish appropriate policies before launching.

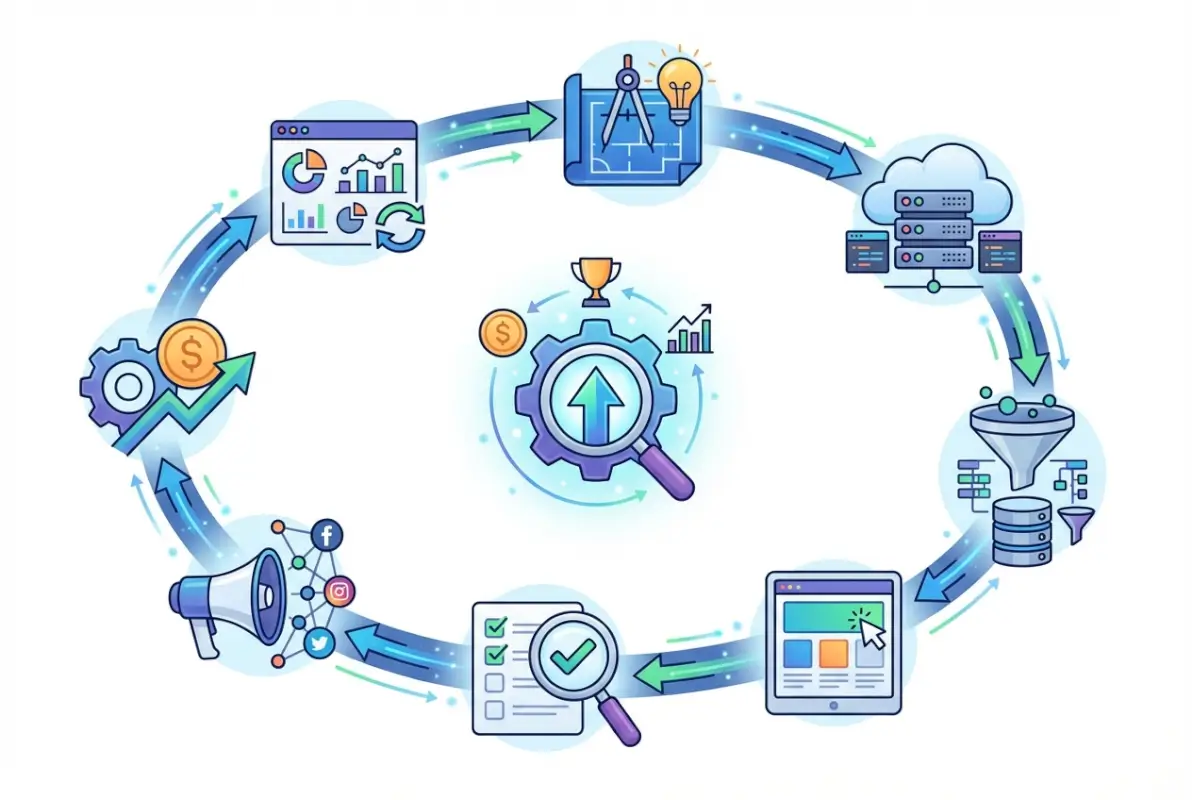

Step 6: Monetization Strategy and Pricing Models

The best time to plan monetization is before you build anything. Revenue models shape feature prioritization, user experience, and growth strategies. Retrofitting monetization into a directory designed for free listings creates friction and user resistance.

Core Revenue Streams for Directories

Successful directories typically combine multiple revenue sources rather than relying on a single stream:

Featured/Paid Listings: Businesses pay for enhanced placement at the top of category pages, highlighted design, or premium badges. This works because better visibility directly drives more customer inquiries. Pricing might range from $25-$200/month depending on niche competitiveness and the traffic you deliver.

Tiered Memberships: Create free, basic paid, and premium tiers with escalating features. Free might include just name, category, and location. Basic paid ($30-$50/month) adds photos, full description, website link, and social media. Premium ($100-$200/month) includes all basic features plus featured placement, enhanced analytics, and priority support.

Display Advertising: Once you reach meaningful traffic (typically 10,000+ monthly visitors), display ads become viable. Banner placements on category pages and listings can generate $500-$5,000+ monthly depending on traffic and niche. Programmatic ad networks make this relatively hands-off.

Lead Generation: Charge per qualified lead or inquiry sent to businesses. This performance-based model aligns your revenue with actual customer value. Common in high-ticket niches like contractors, legal services, or real estate. Pricing might be $10-$100+ per lead depending on service value.

Affiliate Commissions: For certain niches, affiliate relationships with related products or services create additional income. A wedding venue directory might earn commissions from photographer booking platforms or catering marketplaces.

| Revenue Stream | Best For | Typical Pricing |
|---|---|---|
| Featured Listings | Competitive categories | $25-$200/month |
| Basic Membership | Enhanced profiles | $30-$50/month |
| Premium Membership | Top visibility | $100-$200/month |
| Display Ads | High-traffic sites | $500-$5,000/month |
| Lead Generation | High-ticket services | $10-$100/lead |
| Affiliate Commissions | Related products | 5-20% commission |
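To see how several streams add up, a back-of-the-envelope calculation helps. In the sketch below the prices are the low-end figures quoted above, while the customer and lead counts are invented purely for illustration; substitute your own numbers.

```python
def monthly_revenue(streams):
    """Sum price * quantity across revenue streams."""
    return sum(price * quantity for price, quantity in streams.values())


# (price, quantity) pairs. Quantities are made up for illustration.
example = {
    "featured_listings": (25, 20),    # $25/month x 20 businesses
    "basic_memberships": (30, 30),    # $30/month x 30 businesses
    "premium_memberships": (100, 5),  # $100/month x 5 businesses
    "leads": (10, 40),                # $10/lead x 40 leads
}
total = monthly_revenue(example)  # 500 + 900 + 500 + 400
```

Under these assumed counts the total comes to $2,300 per month, with no single stream contributing more than about 40% of it, which is the point of combining streams.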

Pricing Without Alienating Listings

The mistake many directory owners make is charging too much too soon or making the free tier so limited it provides no value. Your free tier should be genuinely useful—enough so that a business sees value in having the listing even without paying. This creates the network effect that makes your directory useful to searchers.

Paid tiers then offer clear, tangible upgrades that businesses can directly connect to customer acquisition. “Get three photos and a logo” is less compelling than “Appear in the top three results for your category” because one is about features, the other about outcomes.

Test pricing through limited-time promotional tiers. Launch with introductory pricing ($20/month instead of $50/month) for the first 50 premium members. This creates urgency, provides validation at multiple price points, and establishes a baseline of paying customers you can survey about willingness to pay more for additional features.

Step 7: Launch, Growth, and Scale Tactics

Launch day is just the beginning. The real work happens in the weeks and months after when you’re systematically driving traffic, acquiring listings, and optimizing conversion funnels.

Launch Plan Essentials

Soft launch to a limited audience first: friends, industry connections, a small email list, or a specific geographic area. This controlled rollout lets you identify broken workflows, confusing user interfaces, and technical issues without embarrassing yourself in front of your entire market. Gather feedback aggressively through user interviews and observation. Watch someone use your directory without helping them; you’ll learn more in 10 minutes than from 100 survey responses.

Public launch comes after you’ve addressed critical feedback and have enough quality listings to deliver genuine value. Aim for at least 50-100 core listings in your most important categories. Announce through press releases to local media, industry publications, and online communities your target audience frequents. Create launch content that explains your unique value proposition—why you exist and why people should use you instead of alternatives.

Growth Engines to Activate

Directory growth requires two parallel efforts: acquiring listings and acquiring users. Neither works without the other.

Listing Owner Acquisition: Reach out directly to high-value businesses and offer free premium listings in exchange for feedback during your beta period. This seeds your directory with quality while building relationships with providers who may pay later. Create simple claim flows that let businesses verify ownership and upgrade their free listings with minimal friction. Send automated “claim your listing” emails to businesses you’ve added, framing it as an opportunity to control their information rather than a sales pitch.

User Acquisition: SEO provides the long-term growth engine, but takes 3-6 months to gain traction. In the meantime, leverage content marketing by creating helpful guides related to your niche that link back to your directory. Build relationships with complementary websites and secure backlinks. Participate genuinely in online communities where your target audience gathers—answer questions and provide value before ever mentioning your directory. When you do share it, frame it as a resource that solves the specific problem being discussed.

Content and SEO Momentum: Publish new category pages, location pages, and helpful content consistently. Search engines reward fresh, growing sites with more frequent crawling and faster ranking improvements. Aim for 2-4 new high-quality pages weekly during your first six months. Track rankings for your target keywords and double down on what’s working.

Common Pitfalls and How to Avoid Them

I’ve seen promising directories fail because founders spent six months perfecting features no one needed while ignoring the basics. Launch with core functionality and expand based on actual user behavior and requests. Don’t build a mobile app before you’ve proven the web version. Don’t add advanced filtering before you have enough listings to make filtering useful.

Another common mistake is neglecting existing listings while chasing new ones. Stale, outdated information destroys trust faster than incomplete coverage. Better to have 200 current, verified listings than 2,000 listings where 30% have disconnected phone numbers.

Finally, many directory owners give up right before SEO traction arrives. Organic growth compounds slowly at first—you might see only 50 visitors in month one, 100 in month two, 200 in month three. Then suddenly month six brings 2,000 visitors and month twelve brings 20,000. The curve is exponential, not linear, but you have to survive the early slow months to reach the hockey stick.
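The illustrative figures in the paragraph above imply a specific compounding rate, which is easy to work out. This sketch assumes steady month-over-month growth between month one and month twelve, which real traffic rarely follows exactly.

```python
def implied_monthly_growth(start_visitors, end_visitors, intervals):
    """Constant month-over-month growth rate that links two traffic figures."""
    return (end_visitors / start_visitors) ** (1 / intervals) - 1


# 50 visitors in month one, 20,000 in month twelve: 11 monthly intervals.
rate = implied_monthly_growth(50, 20_000, 11)

# Simple doubling from 50 visitors, for comparison with the month-six figure.
month_six_if_doubling = 50 * 2 ** 5
```

The implied rate works out to a little over 70% per month, and plain doubling from 50 visitors would reach 1,600 by month six, in the same range as the 2,000 mentioned above. Either way, the early months look flat in absolute terms even when the growth rate is healthy.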

Additional Considerations: Competitive Landscape and Case Studies

Understanding what’s already working in the directory space provides valuable pattern recognition. You’re not looking to copy existing directories but to identify principles you can adapt to your niche.

Local business directories like Yelp and Angie’s List succeeded by combining comprehensive coverage with user reviews and ratings. The lesson? Social proof and quality signals matter enormously in categories where trust is essential. Even if you don’t implement full review systems initially, plan for them.

Real estate portals like Zillow and Realtor.com won by providing search functionality more sophisticated than individual agent websites could offer—map-based browsing, detailed filtering, and comprehensive data. The lesson? Your directory should provide discovery capabilities individual providers can’t replicate alone.

Job boards like Indeed and LinkedIn demonstrate the power of both sides of the marketplace actively engaging—employers posting jobs and candidates creating profiles. The lesson? Consider how to activate both sides of your directory. Maybe businesses can post special offers or availability to incentivize return visits from users.

Freelancer marketplaces like Upwork and Fiverr blur the line between directory and marketplace by facilitating transactions but still essentially connecting service seekers with providers. The lesson? You might start as a pure directory but evolution toward transaction facilitation often increases platform value and monetization opportunity.

Event directories succeed by emphasizing timeliness—new content constantly appears as events are added. The lesson? If your niche has temporal elements (seasonal services, limited-time availability), highlight freshness and urgency.

Alternative Paths and Platform Recommendations

For non-developers who want to launch quickly, directory-specific platforms like Directorist (WordPress plugin), Brilliant Directories (standalone platform), or GeoDirectory (WordPress plugin) provide turnkey functionality. These typically cost $100-$500 for the software plus hosting, and you can launch in 1-2 weeks with no coding.

If you have technical skills or budget for development, modern JavaScript frameworks like React or Vue paired with headless CMSs or custom databases offer maximum flexibility. This path costs significantly more ($10,000+ in development) but gives you complete control over features, user experience, and scalability.

The middle ground—no-code platforms like Webflow, Bubble, or Airtable combined with specialized directory templates—offers more customization than plugins but less complexity than custom code. Expect 4-6 weeks to launch and costs around $500-$2,000 for setup plus monthly platform fees.

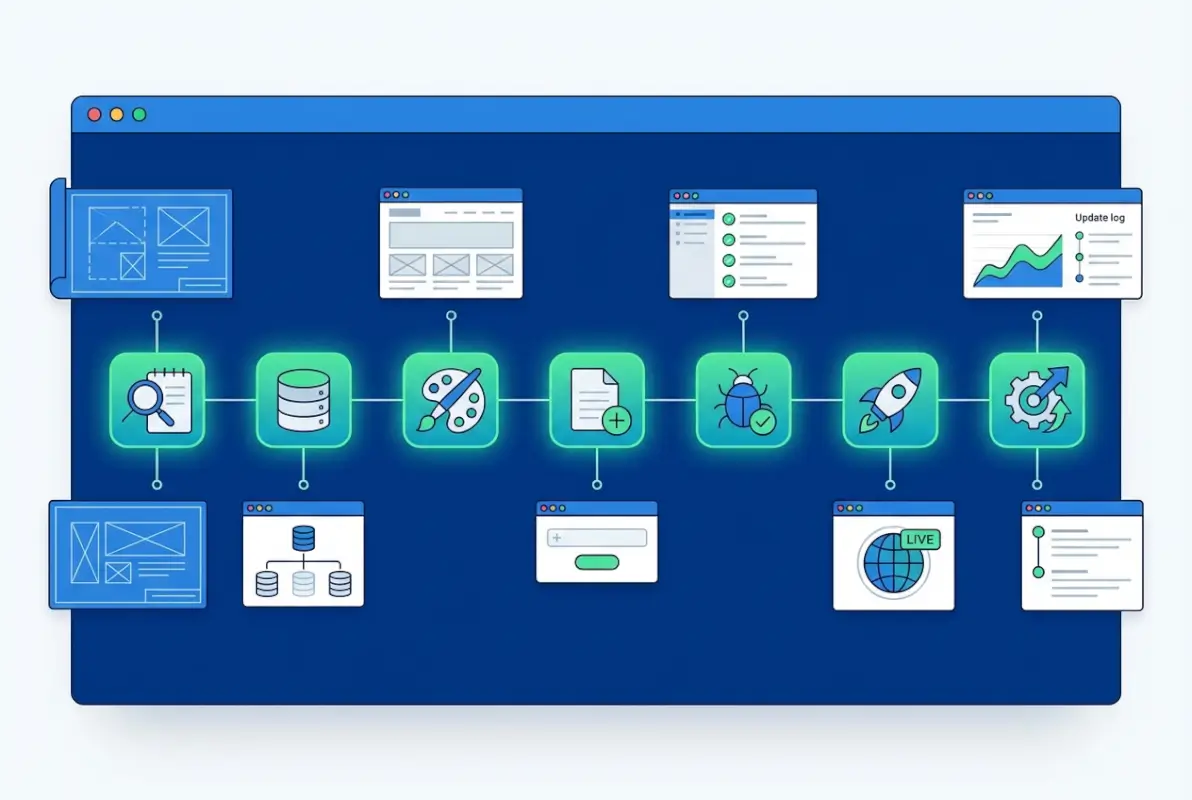

Phased Feature Rollout Strategy

Launch with these essentials in phase one (weeks 1-4):

- Basic listing pages with core fields

- Category and location browsing

- Simple search functionality

- Mobile-responsive design

- Contact forms or click-to-call buttons

Add in phase two (months 2-3):

- Claim and upgrade flows for businesses

- Payment processing for premium listings

- Enhanced listing features (photos, videos, hours)

- Email notifications for new listings in saved categories

Expand in phase three (months 4-6):

- User accounts and saved favorites

- Review and rating systems

- Advanced search filters

- Featured listings and ad placements

- Analytics dashboards for paying businesses

This phased approach gets you to market fast while continuously improving based on real usage patterns, which is far smarter than spending six months building features in isolation.

Practical Checklists and Templates

Essential Listing Fields Template

Use this schema as your starting point, then customize for your niche:

- Core identification: Business name, DBA, category (primary + secondary), tags

- Location: Address, city, state/region, zip, country, latitude/longitude

- Contact: Phone, email, website, social media links

- Description: Short summary (160 chars), full description (500-1000 words)

- Media: Logo, photos (3-10), videos, virtual tour link

- Operations: Hours, service area, languages, payment methods

- Credentials: Licenses, certifications, insurance, years in business

- Special features: Emergency availability, free consultation, senior discount, etc.

- Metadata: Date added, last verified, listing status, upgrade tier

SEO-Friendly URL Structure Template

Follow these patterns for maximum SEO benefit:

- Category pages: /category-name/ (e.g., /plumbing/)

- Location pages: /location-name/ (e.g., /seattle/)

- Category + location: /category/location/ (e.g., /plumbing/seattle/)

- Individual listings: /category/location/business-name/ (e.g., /plumbing/seattle/joes-plumbing/)

Pricing and Feature Matrix Template

| Feature | Free | Basic ($30/mo) | Premium ($100/mo) |
|---|---|---|---|
| Basic listing | ✓ | ✓ | ✓ |
| Photos | ✗ | ✓ (up to 3) | ✓ (up to 10) |
| Full description | ✗ | ✓ | ✓ |
| Featured placement | ✗ | ✗ | ✓ |
| Analytics dashboard | ✗ | ✗ | ✓ |
| Lead notifications | ✗ | ✓ | ✓ (priority) |
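A matrix like this translates naturally into a small configuration that the rest of the site can query. The sketch below is one possible encoding: the `Tier` class, the tier keys, and both helper functions are hypothetical, and the alphabetical tie-break on category pages is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    """Feature set for one pricing tier, mirroring the matrix above."""
    name: str
    monthly_price: int
    max_photos: int
    full_description: bool
    featured_placement: bool
    analytics_dashboard: bool
    lead_notifications: str  # "none", "standard", or "priority"


TIERS = {
    "free": Tier("Free", 0, 0, False, False, False, "none"),
    "basic": Tier("Basic", 30, 3, True, False, False, "standard"),
    "premium": Tier("Premium", 100, 10, True, True, True, "priority"),
}


def allowed_photos(tier_key, uploaded):
    """Keep only as many photos as the tier permits."""
    return uploaded[:TIERS[tier_key].max_photos]


def sort_for_category_page(listings):
    """Featured tiers first, then alphabetical by business name."""
    return sorted(
        listings,
        key=lambda item: (not TIERS[item["tier"]].featured_placement,
                          item["name"].lower()),
    )


# Made-up listings to show the ordering.
ordered = sort_for_category_page([
    {"name": "Acme Plumbing", "tier": "free"},
    {"name": "Zed Plumbing", "tier": "premium"},
    {"name": "Best Drains", "tier": "basic"},
])
```

Keeping tier rules in one place makes upgrade and downgrade flows easier to test, since changing a listing's tier key is enough to change what it can display.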

Frequently Asked Questions

What is an online directory and how does it work?

An online directory is a curated database of businesses, services, or resources organized by categories and locations, helping users discover relevant options. Directories work by collecting listing information, organizing it into searchable structures, then presenting it through browsing or search interfaces. Users find what they need, businesses gain visibility, and directory owners monetize through paid placements or advertising.

How do I choose a niche for my directory?

Choose a niche by identifying underserved markets where existing solutions are inadequate. Look for categories with consistent search volume, identifiable provider bases willing to pay for visibility, and clear user intent. Validate through competitor analysis, keyword research showing hundreds or thousands of monthly searches, and interviews with potential users and providers before committing to development.

What features should a new directory launch with?

Launch with basic listing pages containing essential fields, category and location browsing, simple search, mobile-responsive design, and contact mechanisms like forms or click-to-call buttons. Avoid feature bloat initially—prove core value first. Add claim flows, payment processing, enhanced listings, and advanced features in phases 2-3 based on actual user feedback and behavior patterns.

How can I monetize a directory without turning away listings?

Offer a genuinely useful free tier so businesses see value in baseline listings, then provide clear upgrades that deliver tangible outcomes like top placement or enhanced visibility. Use tiered pricing with transparent feature differences. Start with introductory pricing to build a base of paying customers, then adjust based on willingness-to-pay data gathered from actual users rather than assumptions.

How do I validate listing data and maintain quality?

Combine automated validation checking phone numbers and addresses against databases, manual review for edge cases, and community reporting where users flag problems. Implement periodic re-verification by contacting businesses every 6-12 months to confirm their information is still current. Display “last verified” dates to incentivize updates and build user trust through transparency about data freshness.

What is the difference between a directory and a marketplace?

Directories connect users with providers; transactions happen off-platform. Marketplaces facilitate transactions directly on-platform, handling payments, escrow, and fulfillment. Directories monetize through visibility (paid listings, ads) while marketplaces take transaction fees or commissions. Directories are simpler to launch; marketplaces can generate higher revenue per user, but they require more complex infrastructure.

Which platforms are best for building a directory with no code?

Directory-specific WordPress plugins like Directorist or GeoDirectory offer quick launches for a $100-$500 investment plus hosting. Brilliant Directories provides standalone platform functionality. No-code tools like Webflow or Bubble with directory templates offer middle-ground customization. Each suits different needs: WordPress for content strength, standalone platforms for integrated features, no-code builders for design flexibility.

How can I drive traffic to a new directory site?

Build foundational SEO through clean URL structures, schema markup, and location-based content targeting local keywords. Create helpful guides and content that attract organic search traffic over 3-6 months. In the meantime, secure backlinks from related websites, participate genuinely in online communities, reach out to high-value listings directly, and leverage partnerships with complementary services.

How do I handle user privacy and listing approvals?

Establish clear privacy policies explaining what data you collect and how you use it. For self-submitted listings, require explicit opt-in consent. Implement manual or automated approval workflows before listings go live to maintain quality. Provide opt-out mechanisms for businesses that don’t want to be listed. Ensure GDPR compliance if you serve European users and follow CAN-SPAM rules for email outreach.
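
One way to picture the consent, approval, and opt-out flow is as a small state machine. The Python sketch below is illustrative only: the Status values, the Submission record, and the function names are hypothetical, and a real platform would persist these states in its database rather than in memory.

```python
from dataclasses import dataclass
from enum import Enum

class Status(Enum):
    PENDING = "pending"      # submitted, waiting for review
    APPROVED = "approved"    # live on the site
    REJECTED = "rejected"    # failed review
    OPTED_OUT = "opted_out"  # business asked not to be listed

# Hypothetical submission record for illustration.
@dataclass
class Submission:
    business_name: str
    consent_given: bool
    status: Status = Status.PENDING

def submit(business_name: str, consent_given: bool) -> Submission:
    """Accept a self-submitted listing only with explicit opt-in consent."""
    if not consent_given:
        raise ValueError("explicit opt-in consent is required")
    return Submission(business_name, consent_given)

def review(sub: Submission, approve: bool) -> Submission:
    """Move a pending submission to approved or rejected."""
    if sub.status is not Status.PENDING:
        raise ValueError("only pending submissions can be reviewed")
    sub.status = Status.APPROVED if approve else Status.REJECTED
    return sub

def opt_out(sub: Submission) -> Submission:
    """Honor an opt-out request regardless of the current status."""
    sub.status = Status.OPTED_OUT
    return sub

def is_public(sub: Submission) -> bool:
    """Only approved listings are shown to visitors."""
    return sub.status is Status.APPROVED
```

The design choice worth noting is that nothing is public by default: a listing appears only after review, and an opt-out takes it down immediately.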

What are common SEO considerations for local directories?

Implement clean, hierarchical URL structures including location and category information. Use LocalBusiness schema markup for listings and BreadcrumbList for navigation. Create unique location and category landing pages with original content addressing local considerations. Optimize for “near me” searches through local-focused content. Ensure mobile responsiveness and fast load speeds since most local searches happen on mobile.
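
To make the URL and schema advice concrete, here is a minimal Python sketch that builds a hierarchical listing path and emits LocalBusiness and BreadcrumbList JSON-LD. The function names, the /location/category/listing layout, and the selection of properties are assumptions for illustration; schema.org defines many more properties than shown here.

```python
import json
import re

def slugify(text: str) -> str:
    """Lowercase the text and collapse non-alphanumeric runs into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")

def listing_url(city: str, category: str, name: str) -> str:
    """Hierarchical path /location/category/listing/ (one possible layout)."""
    return "/" + "/".join(slugify(part) for part in (city, category, name)) + "/"

def local_business_jsonld(name: str, street: str, city: str,
                          region: str, phone: str, url: str) -> str:
    """JSON-LD for a single listing using the schema.org LocalBusiness type."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
        },
    }
    return json.dumps(data, indent=2)

def breadcrumb_jsonld(base_url: str, crumbs: list[tuple[str, str]]) -> str:
    """JSON-LD BreadcrumbList from (name, path) pairs, ordered root first."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name,
             "item": base_url + path}
            for i, (name, path) in enumerate(crumbs, start=1)
        ],
    }
    return json.dumps(data, indent=2)
```

Each JSON string would typically be placed in a script tag of type application/ld+json on the matching listing page, with the same path serving as the canonical URL.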

How should I price paid listings and memberships?

Research competitor pricing in your niche as a starting baseline. Test multiple tiers, typically free, basic paid ($30-$75 per month), and premium ($100-$200+ per month), with clear feature differentiation. Focus tier benefits on outcomes like “appear first in results” rather than features like “add more photos.” Launch with promotional pricing for early adopters, gather willingness-to-pay data, then adjust based on conversion rates and user feedback.

Your Directory Journey Starts With Clarity

Building a successful online directory isn’t about having the most advanced technology or the biggest budget—it’s about understanding a specific audience’s needs and systematically addressing them better than alternatives. The seven-step framework we’ve covered provides the roadmap: define your niche precisely, plan your data structure thoughtfully, choose appropriate technology, build SEO foundations, maintain data quality rigorously, monetize sustainably, and launch with a clear growth plan.

The directories that thrive long-term share common characteristics: they solve real discovery problems, they maintain high data quality, they provide genuine value in free tiers while offering meaningful paid upgrades, and they continuously optimize based on user behavior rather than assumptions. You don’t need perfection at launch; you need a solid foundation and a commitment to iteration.

Your next concrete steps? Validate your niche through keyword research and audience interviews this week. Sketch your taxonomy and essential listing fields on paper. Evaluate 2-3 platform options with actual hands-on testing. Then make a decision and start building—imperfect action beats perfect planning every time. The directory landscape continues evolving, but the fundamentals of curation, quality, and user value remain constant. Focus there and you’ll build something that matters.
